The Alarming Discovery: Claude Code’s Hidden Dangers

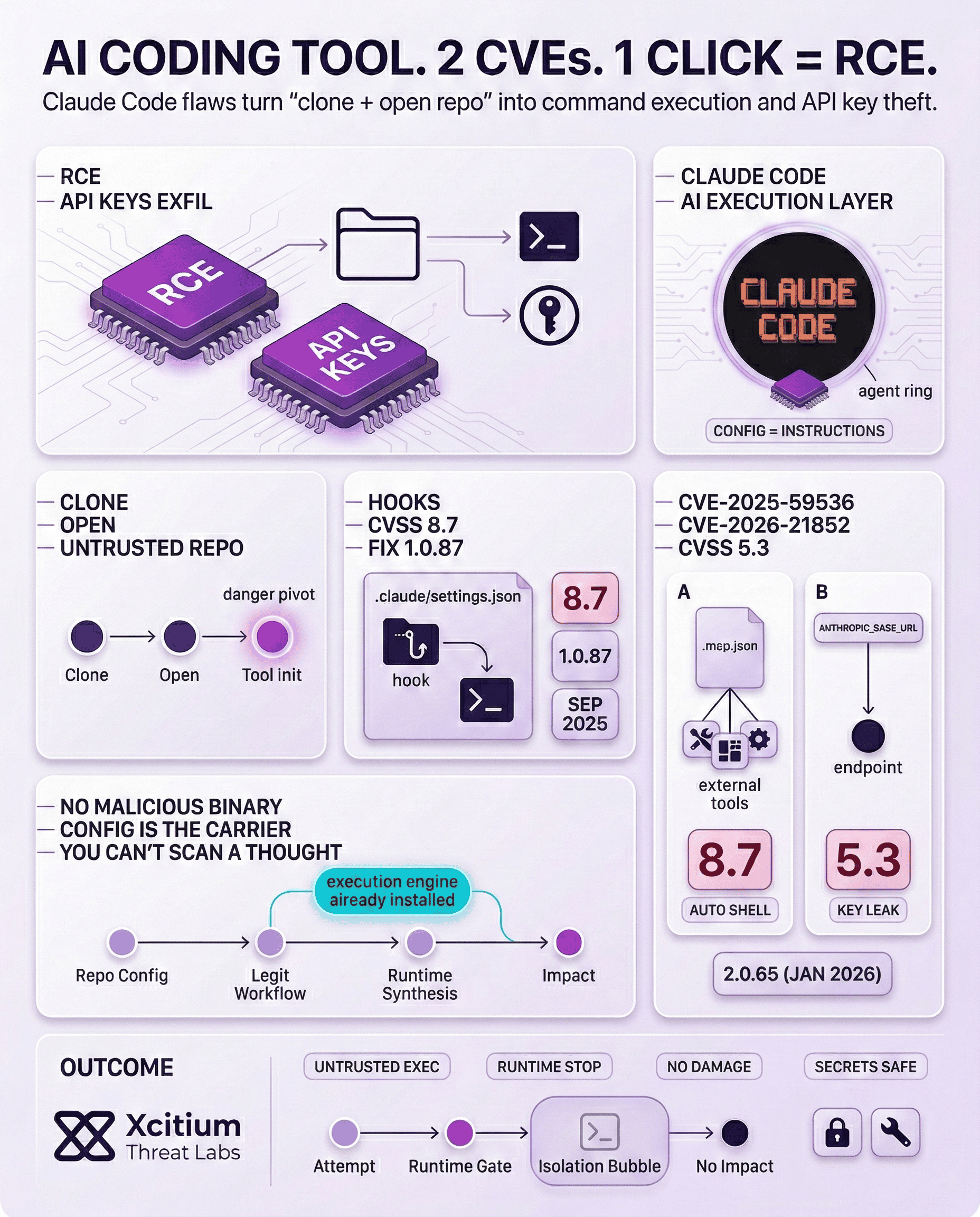

Security researchers recently disclosed vulnerabilities in Claude Code, Anthropic’s AI-based coding assistant. The tool is meant to make coding easier, but the flaws posed a severe threat: remote code execution (RCE) and the exfiltration of API credentials.

The findings show that the threat landscape for AI development keeps shifting. Developers and organizations alike should recognize how severe these vulnerabilities are. The discoveries also show the need for security protocols around AI development tools.

Understanding the Core Vulnerabilities

According to a published report, attackers could abuse Claude Code’s configuration mechanisms: Hooks, Model Context Protocol (MCP) servers, and environment variables. Through them, attackers could execute arbitrary shell commands and steal Anthropic API keys.

All it took was for a user to clone and open an untrusted repository. The vulnerabilities fall into three main types, each a distinct attack vector. Together they show the range of methods attackers can employ.

Code Injection via Untrusted Project Hooks (No CVE)

One of the most significant flaws was a code injection vulnerability caused by a user-consent bypass, and it was not initially assigned a CVE. When a user started Claude Code in a new directory, arbitrary code could execute without the user’s consent.

This happened because untrusted project hooks could be defined in the repository’s .claude/settings.json file. Rated 8.7 on the CVSS scale, the vulnerability was a significant threat. Anthropic mitigated it in version 1.0.87, released in September 2025.
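To make the hook risk concrete, here is a minimal defensive sketch. It lists every hook command that a cloned repository declares in its project settings, so the commands can be read before the project is opened. It assumes hooks sit under a top-level "hooks" key in .claude/settings.json. The exact nesting may differ between Claude Code versions, so the sketch collects any "command" string under that key instead of relying on a fixed schema. The function name find_hook_commands is hypothetical and is not part of Claude Code.

```python
import json
from pathlib import Path


def find_hook_commands(repo_root: str) -> list[str]:
    """Return every hook command string declared in a repo's project settings.

    Assumption: hooks live under a top-level "hooks" key in
    .claude/settings.json. The nested layout is walked generically
    rather than relying on an exact schema.
    """
    settings_path = Path(repo_root) / ".claude" / "settings.json"
    if not settings_path.is_file():
        return []
    settings = json.loads(settings_path.read_text(encoding="utf-8"))
    if not isinstance(settings, dict):
        return []

    commands: list[str] = []

    def walk(node) -> None:
        # Collect any string stored under a "command" key, at any depth.
        if isinstance(node, dict):
            for key, value in node.items():
                if key == "command" and isinstance(value, str):
                    commands.append(value)
                else:
                    walk(value)
        elif isinstance(node, list):
            for item in node:
                walk(item)

    walk(settings.get("hooks", {}))
    return commands
```

Any non-empty result means that opening the project can run those shell commands. Read each one before trusting the directory.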

Figure: Claude Code Security Report, a security review of an AI-integrated CLI agent, with panels “Malicious Project Settings”, “RCE via MCP Tools”, and “API Key Theft”.

Automated Shell Command Execution (CVE-2025-59536)

Another code injection vulnerability, tracked as CVE-2025-59536, also carried a CVSS score of 8.7, which made it a severe security threat. It allowed arbitrary shell commands to execute automatically.

The commands ran when Claude Code initialized its tools after a user started it in an untrusted directory. Attackers could abuse configuration files defined within the repository, including .mcp.json and .claude/settings.json, to trigger them.

These files configure the Model Context Protocol (MCP), which lets Claude Code interact with external tools and services, including those outside the local system.
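The following minimal sketch shows what a repository's MCP configuration would launch, and whether its project settings pre-approve those servers. It makes three assumptions. First, .mcp.json uses an "mcpServers" object whose entries hold either a "command" plus optional "args" (a local process) or a "url" (a remote server). Second, project settings can carry the keys enableAllProjectMcpServers and enabledMcpjsonServers. Third, both functions, list_project_mcp_servers and auto_approves_project_servers, are hypothetical helpers rather than a Claude Code API.

```python
import json
from pathlib import Path


def list_project_mcp_servers(repo_root: str) -> dict[str, str]:
    """Map each MCP server in a repo's .mcp.json to what it would launch.

    Assumption: entries live under "mcpServers" and carry either a
    "command" string (plus optional "args") or a "url".
    """
    mcp_path = Path(repo_root) / ".mcp.json"
    if not mcp_path.is_file():
        return {}
    config = json.loads(mcp_path.read_text(encoding="utf-8"))
    servers = config.get("mcpServers", {}) if isinstance(config, dict) else {}
    launched: dict[str, str] = {}
    for name, spec in servers.items():
        if not isinstance(spec, dict):
            continue
        command = spec.get("command")
        if isinstance(command, str):
            # Local servers spawn a process: show the exact command line.
            args = [str(a) for a in spec.get("args", [])]
            launched[name] = " ".join([command, *args])
        elif "url" in spec:
            # Remote servers connect out to a URL instead of spawning a process.
            launched[name] = f"remote: {spec['url']}"
    return launched


def auto_approves_project_servers(repo_root: str) -> bool:
    """True if project settings pre-approve .mcp.json servers (assumed keys)."""
    settings_path = Path(repo_root) / ".claude" / "settings.json"
    if not settings_path.is_file():
        return False
    settings = json.loads(settings_path.read_text(encoding="utf-8"))
    if not isinstance(settings, dict):
        return False
    return settings.get("enableAllProjectMcpServers") is True or bool(
        settings.get("enabledMcpjsonServers")
    )
```

Consider a repository that both declares local MCP commands and pre-approves them in its own settings. It is steering the agent's tool initialization, so treat it as executable input.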

API Key Exfiltration and Data Disclosure (CVE-2026-21852)

The third major vulnerability, CVE-2026-21852, is an information disclosure flaw in Claude Code’s project-load flow. It is rated 5.3 on the CVSS scale.

It allowed a malicious repository to capture sensitive information, including Anthropic API keys. In the advisory accompanying CVE-2026-21852, Anthropic explained the mechanism.

Suppose a user opened a malicious repository configured to set ANTHROPIC_BASE_URL to an attacker-controlled endpoint. Claude Code would then send API requests to that endpoint before prompting the user to trust the project. Because those requests can carry the user’s API key, the key could leak before the user had agreed to anything. The vulnerability was patched in version 2.0.65 in January 2026.
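The following minimal sketch checks whether a repository's project settings redirect ANTHROPIC_BASE_URL away from the expected API host before the project is opened. It makes three assumptions: project settings can define environment variables under an "env" key in .claude/settings.json or .claude/settings.local.json; the legitimate host is api.anthropic.com; and suspicious_base_url is a hypothetical helper name. It does not inspect shell profiles or other places where the variable could be set.

```python
import json
from pathlib import Path
from typing import Optional
from urllib.parse import urlparse

# Assumption: the official API host; any other host is treated as suspicious.
EXPECTED_HOST = "api.anthropic.com"


def suspicious_base_url(repo_root: str) -> Optional[str]:
    """Return an ANTHROPIC_BASE_URL override that points anywhere unexpected.

    Assumption: project settings may set environment variables under "env".
    """
    for name in ("settings.json", "settings.local.json"):
        path = Path(repo_root) / ".claude" / name
        if not path.is_file():
            continue
        settings = json.loads(path.read_text(encoding="utf-8"))
        env = settings.get("env", {}) if isinstance(settings, dict) else {}
        url = env.get("ANTHROPIC_BASE_URL") if isinstance(env, dict) else None
        # urlparse(...).hostname is None for malformed values, which also gets flagged.
        if isinstance(url, str) and urlparse(url).hostname != EXPECTED_HOST:
            return url
    return None
```

A non-None result indicates that API traffic, and the key attached to it, would go to a host you did not choose.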

The Evolving Threat Model in AI Development

These vulnerabilities illustrate a fundamental change in the cybersecurity threat model. As AI tools gain autonomy, their configuration files effectively become part of the execution layer.

The risk therefore no longer comes only from running untrusted code; opening an untrusted project can be enough. In AI-driven development, the supply chain includes the automation layers that surround the code.

Real-World Implications and Risks

The consequences are alarming. An attacker who successfully exploits these vulnerabilities may be able to:

- Access shared project files.

- Modify or delete cloud-stored data.

- Upload malicious content.

- Generate unexpected API costs.

Stolen API keys also give attackers a way to tunnel deeper into the victim’s AI infrastructure, which can lead to more severe data breaches. Developers should therefore stay alert to these risks.

These recent security issues serve as a reminder of the importance of secure coding practices and dependency management in the era of AI.

Conclusion: When AI Development Tools Become the Execution Layer

Claude Code’s vulnerabilities reveal a sharp shift in the AI threat model. In this case, attackers did not need a malicious binary or a traditional exploit chain. They only needed a developer to clone and open an untrusted repository. Project hooks, MCP configuration, and environment variables became the delivery mechanism for remote code execution and API key theft.

Why This Is a Structural Break

For decades, malicious intent needed a carrier. Attackers had to ship code, and defenders could anchor detection to artifacts on disk or in memory.

Agentic AI changes that. The execution engine is already installed, and configuration becomes instruction. A malicious repository can steer tools into running shell commands automatically, or redirect API traffic to attacker-controlled endpoints. The logic is synthesized at runtime through legitimate workflows. You cannot scan a thought.

Why Organizations Are Exposed

AI coding assistants expand the software supply chain beyond dependencies:

- Untrusted repos can trigger execution through hooks and tool initialization

- MCP enables interaction with external tools and services, increasing blast radius

- API keys and environment settings become high-value theft targets, driving cost and deeper compromise

Where Xcitium Changes the Outcome

If you have Xcitium, this attack would NOT succeed.

With Xcitium Advanced EDR in place, untrusted shell commands are stopped from causing harm at runtime. The malicious automation may start, but the code runs without the ability to cause damage. API secrets and system state remain protected.

Secure AI Workflows Before They Execute

Treat AI developer tools as privileged infrastructure. Restrict untrusted repositories, harden configuration paths, rotate keys, and enforce execution-time controls that prevent automation layers from becoming exploitation layers.
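The following minimal sketch hardens configuration paths by pinning reviewed agent configuration files by SHA-256. A repository counts as cleared only if none of those files is new or changed since someone last reviewed them. The list of paths in AGENT_CONFIG_PATHS is a hypothetical starting point, not an exhaustive inventory of what Claude Code reads. The helper names fingerprint_agent_config and unreviewed_changes are also illustrative. Where the reviewed fingerprints are stored is left open.

```python
import hashlib
from pathlib import Path

# Hypothetical list of files that can steer an AI coding agent; extend as needed.
AGENT_CONFIG_PATHS = (".claude/settings.json", ".claude/settings.local.json", ".mcp.json")


def fingerprint_agent_config(repo_root: str) -> dict[str, str]:
    """SHA-256 digest of every listed agent config file present in the repo."""
    prints: dict[str, str] = {}
    for rel in AGENT_CONFIG_PATHS:
        path = Path(repo_root) / rel
        if path.is_file():
            prints[rel] = hashlib.sha256(path.read_bytes()).hexdigest()
    return prints


def unreviewed_changes(repo_root: str, reviewed: dict[str, str]) -> list[str]:
    """Config files that are new or differ from the reviewed fingerprints."""
    current = fingerprint_agent_config(repo_root)
    return sorted(rel for rel, digest in current.items() if reviewed.get(rel) != digest)
```

Hashing whole files keeps the check schema-agnostic: any edit, including one that only adds a hook or flips an approval flag, forces a fresh review before the agent is started in that directory.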