Generative AI is moving into operations. Recently, reporting around Operation Epic Fury said the United States military used Anthropic’s Claude tools during strikes, even as federal leaders moved to sever ties with the vendor. Once a model is embedded, politics can’t unplug it overnight.

Claude in Operation Epic Fury: What’s Confirmed and What’s Still Unknown

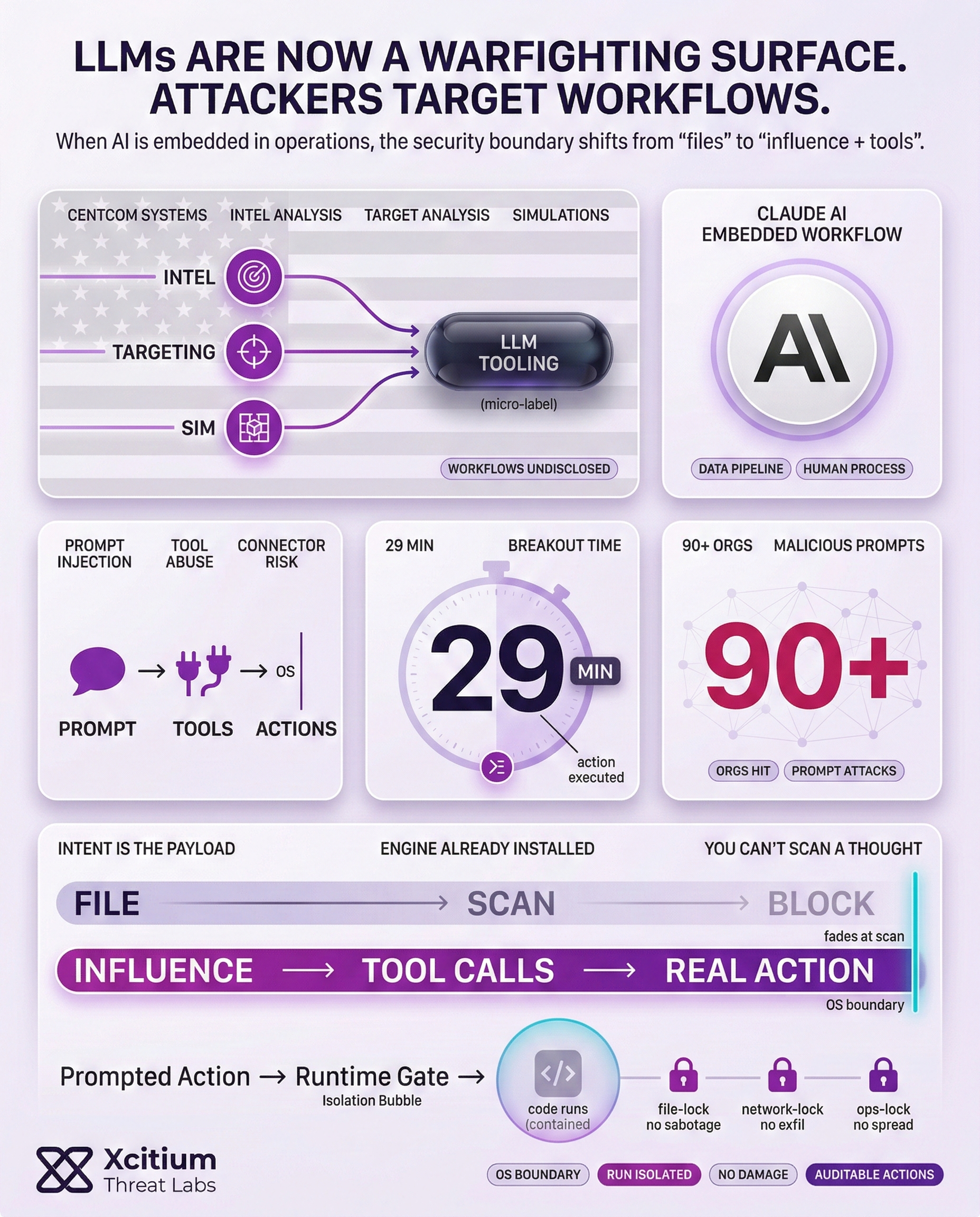

It has been reported that the Pentagon made use of Anthropic AI services, including the Claude tools, according to a source familiar with the matter. However, it was not possible to determine the manner in which the tools were utilized. According to reports, the alleged use cases included intelligence analysis, target analysis, and simulations associated with systems operated by the U.S. Central Command.

Some of the key takeaways regarding the use of the Claude tool include the following:

- The tool was reportedly utilized during the operation.

- The use continued even after the attempted policy cutoff.

- Workflows, inputs, and oversight remain undisclosed.

Why “Just Turn It Off” Doesn’t Work in Classified Environments

Defense IT models are not standalone programs. Instead, they reside within an accredited network, data pipeline, and human process. Hence, an executive order might not necessarily translate into the actual technical reality.

It was mentioned that replacing Claude on classified networks might take months due to the process of rerouting data and re-approving the replacement process. It is interesting to note that the Department of Defense’s ethical AI principles emphasize the concept of “governable” systems, which include the ability to disengage/deactivate systems showing unintended behavior.

Guardrails vs “All Lawful Use”: The Contract Problem Behind The Drama

Anthropic says it sought two narrow exceptions: mass domestic surveillance of Americans and fully autonomous weapons. Multiple reports described internal pressure within the Pentagon for broader “all lawful use,” alongside references to potential measures such as designating the matter a “supply chain risk” or invoking the Defense Production Act. Under U.S. law, supply-chain-risk procurement actions are tied to protecting national security systems from sabotage, compromise, or subversion.

Privacy concerns sit underneath that language. The Office of the Director of National Intelligence has warned that commercially available data, combined with powerful analytics, can raise significant privacy and civil-liberties concerns even when each dataset looks non-sensitive on its own.

The Cybersecurity Angle: LLMs Are a New Attack Surface

When an LLM is exposed to sensitive workflows, an attacker can target the LLM just like other critical systems. The OWASP Foundation Top 10 for LLM Applications lists prompt injection as a major concern for LLM applications, which can affect the output and decisions made by the LLM. Additionally, there is an increased chance of supply chain attacks via plugins/connectors and data sources.

The trends are already being observed by attackers. A report on the global threat analysis for 2026 states that attackers used malicious prompts against GenAI tools in over 90 organizations, while the average breakout time was 29 minutes in 2025. A security-by-design approach is needed for GenAI systems from the very beginning.

Controls that scale:

- Limit model-accessible data and tool permissions.

- Log prompts, outputs, and actions for audits.

- Red-team prompt injection and data leakage.

- Keep a fallback mode and vendor exit plan.

Conclusion: When AI Is Embedded, Policy Cannot Unplug It

The reported use of Claude during Operation Epic Fury highlights a strategic reality. Once an AI model is integrated into classified workflows, it becomes part of an accredited network, a data pipeline, and a human process. A policy directive can signal intent, but replacing a deployed model can take months due to rerouting data and re-validating systems.

Why This Is a Structural Security Shift

For decades, malicious intent needed a carrier. Attackers had to ship code, and defenders could anchor detection to artifacts. AI changes that model. The execution engine is already installed, and attackers can influence outcomes through prompt injection, tool abuse, connector compromise, or poisoned data sources. The malicious component becomes intent and instruction, not a stable file. You cannot scan a thought.

Why Oversight and Privacy Become the Center of Gravity

The contract dispute and supply chain language point to the same underlying risk. Aggregating commercially available data with powerful analytics can create surveillance capability at scale, even when each dataset appears non-sensitive. At the same time, “all lawful use” pressures collide with governability requirements, including the ability to disengage systems that behave unexpectedly.

Where Xcitium Changes the Outcome

LLMs are now a new attack surface. When an LLM is embedded into sensitive workflows, attackers can target it like any other critical system. Prompt injection is a primary risk for LLM applications, and supply chain exposure increases through plugins, connectors, and external data sources.

Xcitium’s answer is to make the execution layer safe, even when the decision layer is influenced.

With Xcitium Advanced EDR in place, AI driven tool actions are enforced at the OS boundary. Malicious prompts can try to steer outcomes, but code can run without being able to cause damage. That is the control point when instructions are dynamic and you cannot scan a thought.

Secure AI Like Critical Infrastructure

AI in operations demands security by design. Limit tool permissions and model accessible data, audit prompts and actions, red-team for prompt injection, maintain fallback modes, and build a realistic vendor exit path. Then enforce architectural controls that keep execution safe when intent is the attack surface.