Why Live Chat Phishing is a Growing Threat

Attackers are constantly finding new ways to make phishing more convincing. Impersonation scams in identity theft cases have reportedly surged 148% over the past two years, largely because of advances in AI and the ubiquity of real-time chat tools. Phishers now turn to SaaS support platforms (like LiveChat) and collaboration apps (like Teams) to impersonate trusted customer service agents.

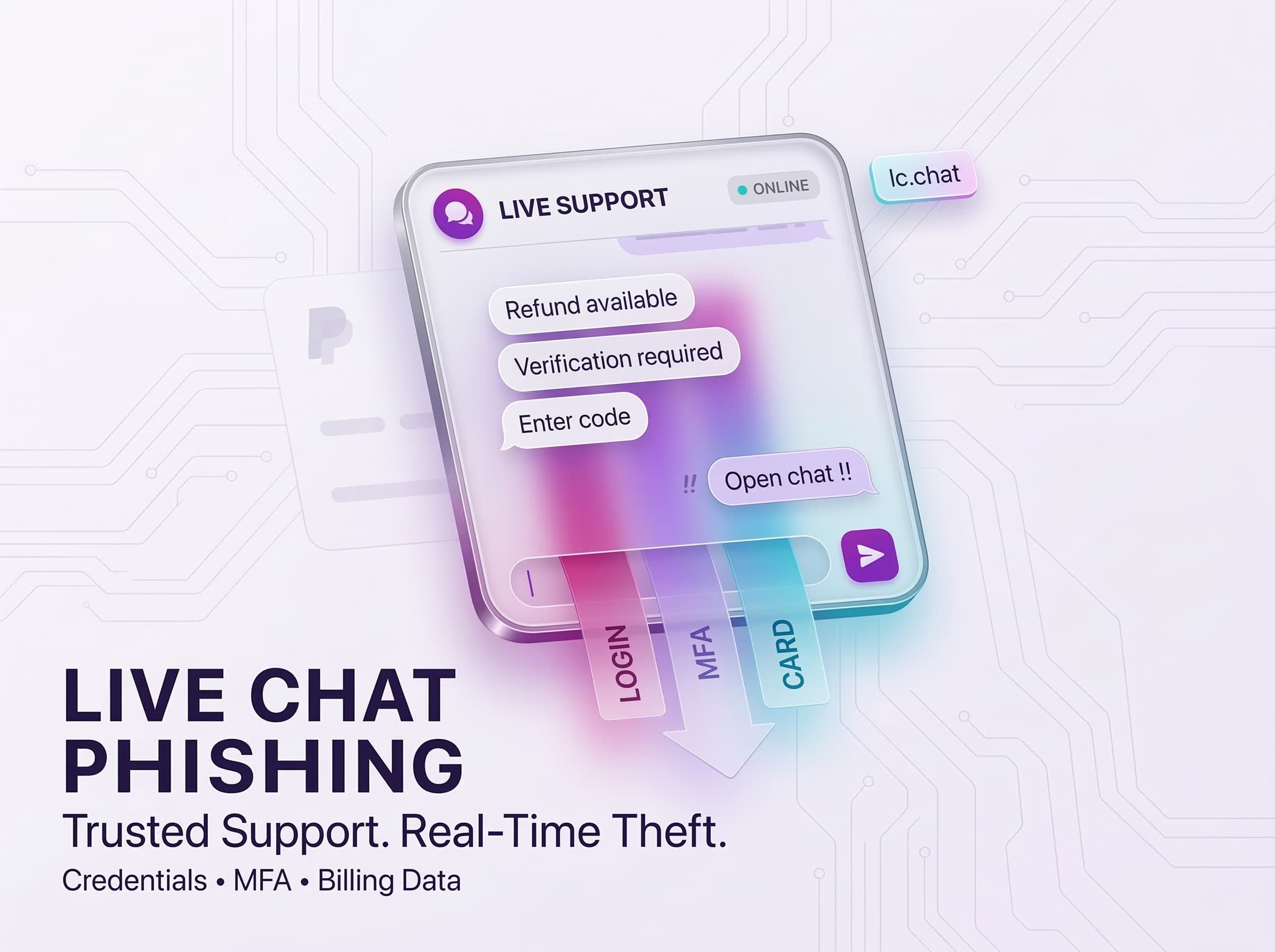

Attack in Action: LiveChat Used to Steal Credentials

Cybercriminals ran a two-pronged phishing campaign on LiveChat’s platform. Each email used a malicious link to send the victim to a branded support chat, and both lures opened a LiveChat window hosted under lc.chat (LiveChat’s domain). From there, a human impersonator or bot collected sensitive data in real time.

- PayPal Refund Scam: The victim receives a spoofed PayPal email claiming they are due a $200 refund. Clicking the “View Transaction Details” button opens a LiveChat window styled like PayPal support. The chat then directs the user to a fake PayPal login page. After the victim enters credentials, the attacker captures the MFA code sent to the victim’s phone to bypass two-factor protection. Finally, additional forms collect the user’s billing information (even date of birth) and full credit-card details (number, expiry, CVC) under the guise of “security verification”.

Despite careful branding and a polite chat tone, each of these scenarios was simply a human-led scam to steal data. In one case the fraudster even assured the user that a refund would be received after the chat, keeping the victim talking on the platform.

Why It Works: The Human Touch in Phishing

These live chat attacks work because they feel like real-time support. Victims believe they are talking to a helpful representative, and that impression lowers their usual wariness. The one-to-one nature of the conversation, even online, builds trust, and a pending refund adds urgency. In short, attackers combine strong psychological cues: urgent lures + trusted brand impersonation + personal interaction = very effective scams.

- Trusted Branding: Using logos and site styling of big names immediately tricks people into thinking the chat is legitimate. It’s hard to question a support rep who appears authentic.

- Urgency and Reward: The promise of money (a refund) or of resolving a problem creates emotional pressure. This tactic preys on curiosity and fear: people want to act fast to “get their money” or fix an issue.

- Reduced Scrutiny: In a quick chat, users don’t have time to analyze details as they would in an email. They’re focused on the conversation flow. As one report puts it, personal interaction “reduces the victim’s caution and increases the chance of successful credential and data theft”.

- Human Factor: A human element is present in about 60% of breaches, and phishing remains the top cause of compromised credentials. In this case, the scammers were actual humans responding to each query, complete with spelling errors. Rough, casual language with mistakes like “Open chat !!” counterintuitively signals a real person rather than a cheap bot, which makes the victim less alert.

Because standard anti-phishing tools often rely on scanning for malicious links or domains, a live chat interface can slip under the radar. There’s no classic suspicious attachment, and the interaction is hosted on a legitimate service. Thus, even vigilant users and automated filters struggle to flag it.
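One way to narrow this gap is to stop judging a link by domain reputation alone and compare it with the brand the email claims to represent. The following is a minimal sketch of that idea, not a description of any product's detection logic. The domain lists, the function names, and the example URL are assumptions made for illustration; only lc.chat and the PayPal lure come from the campaign described above.

```python
from urllib.parse import urlparse

# Hypothetical lists for illustration; a real deployment would maintain its own.
SHARED_CHAT_HOSTS = {"lc.chat"}  # lc.chat is the chat domain named in the campaign
BRAND_DOMAINS = {"paypal": {"paypal.com"}}  # assumed official domains per brand


def host_matches(host: str, domain: str) -> bool:
    """True if host equals domain or is a subdomain of it."""
    host = host.lower().rstrip(".")
    return host == domain or host.endswith("." + domain)


def chat_lure_signals(display_name: str, link: str) -> list[str]:
    """Return reasons why a brand-themed email link deserves a closer look."""
    host = urlparse(link).hostname or ""
    signals = []
    # Signal 1: the sender claims a brand, but the link leaves that brand's domains.
    for brand, domains in BRAND_DOMAINS.items():
        if brand in display_name.lower():
            if not any(host_matches(host, d) for d in domains):
                signals.append(f"{brand} branding but link host is {host}")
    # Signal 2: the link opens a chat on a shared, multi-tenant support platform.
    if any(host_matches(host, d) for d in SHARED_CHAT_HOSTS):
        signals.append(f"link opens a chat on shared platform {host}")
    return signals


if __name__ == "__main__":
    # Hypothetical URL, used only to exercise the check.
    for reason in chat_lure_signals("PayPal Support", "https://lc.chat/example"):
        print(reason)
```

Neither signal proves fraud on its own, since many legitimate businesses host support chats on shared platforms. Together they can raise the priority of a message that a reputation-only filter would pass.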

Beyond LiveChat: Chat and Collaboration Under Siege

Phishers are increasingly abusing popular, trusted messaging services. A recent phishing attack on Microsoft Teams used fake invitations that resembled urgent billing notifications and tricked users into calling the attackers. More than 12,000 phishing invitations were sent globally, with team names such as “Subscription Auto-Pay Notice… Contact support urgently.” The attackers also used subtle spelling variations in the messages to evade detection. Their objective was to get users to initiate a phone call, following a familiar phishing pattern.
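Spelling variations defeat exact keyword matching, so a defender can normalize text before comparing it with known lure phrases. The sketch below shows one possible approach using Python's standard library. The substitution table, the phrase list, and the 0.85 threshold are all assumptions, because the report does not say which variations the attackers actually used.

```python
import unicodedata
from difflib import SequenceMatcher

# Assumed character substitutions; the actual variations used are not documented.
LOOKALIKES = str.maketrans({"0": "o", "1": "l", "3": "e", "5": "s", "@": "a", "$": "s"})

# Hypothetical lure phrases, loosely based on the team name quoted above.
LURE_PHRASES = ["subscription auto-pay notice", "contact support urgently"]


def normalize(text: str) -> str:
    """Fold accents, look-alike characters, case, and extra spaces."""
    decomposed = unicodedata.normalize("NFKD", text)
    stripped = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return " ".join(stripped.lower().translate(LOOKALIKES).split())


def looks_like_lure(name: str, threshold: float = 0.85) -> bool:
    """True if any window of the normalized name closely matches a lure phrase."""
    text = normalize(name)
    for phrase in LURE_PHRASES:
        width = len(phrase)
        # Slide a phrase-sized window over the text and compare fuzzily.
        for start in range(max(1, len(text) - width + 1)):
            window = text[start:start + width]
            if SequenceMatcher(None, window, phrase).ratio() >= threshold:
                return True
    return False


if __name__ == "__main__":
    print(looks_like_lure("Subscripti0n Aut0-Pay N0tice"))
    print(looks_like_lure("Weekly design sync"))
```

Fuzzy matching of this kind trades precision for recall, so a match is better treated as a reason for review than as grounds for automatic blocking.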

Attackers are also using social media direct messages, messenger services such as WhatsApp and Telegram, and fake SMS chatbots. Any real-time communication service can be abused. With advances in AI and voice cloning, attackers are using short audio clips and chatbots to impersonate company representatives.

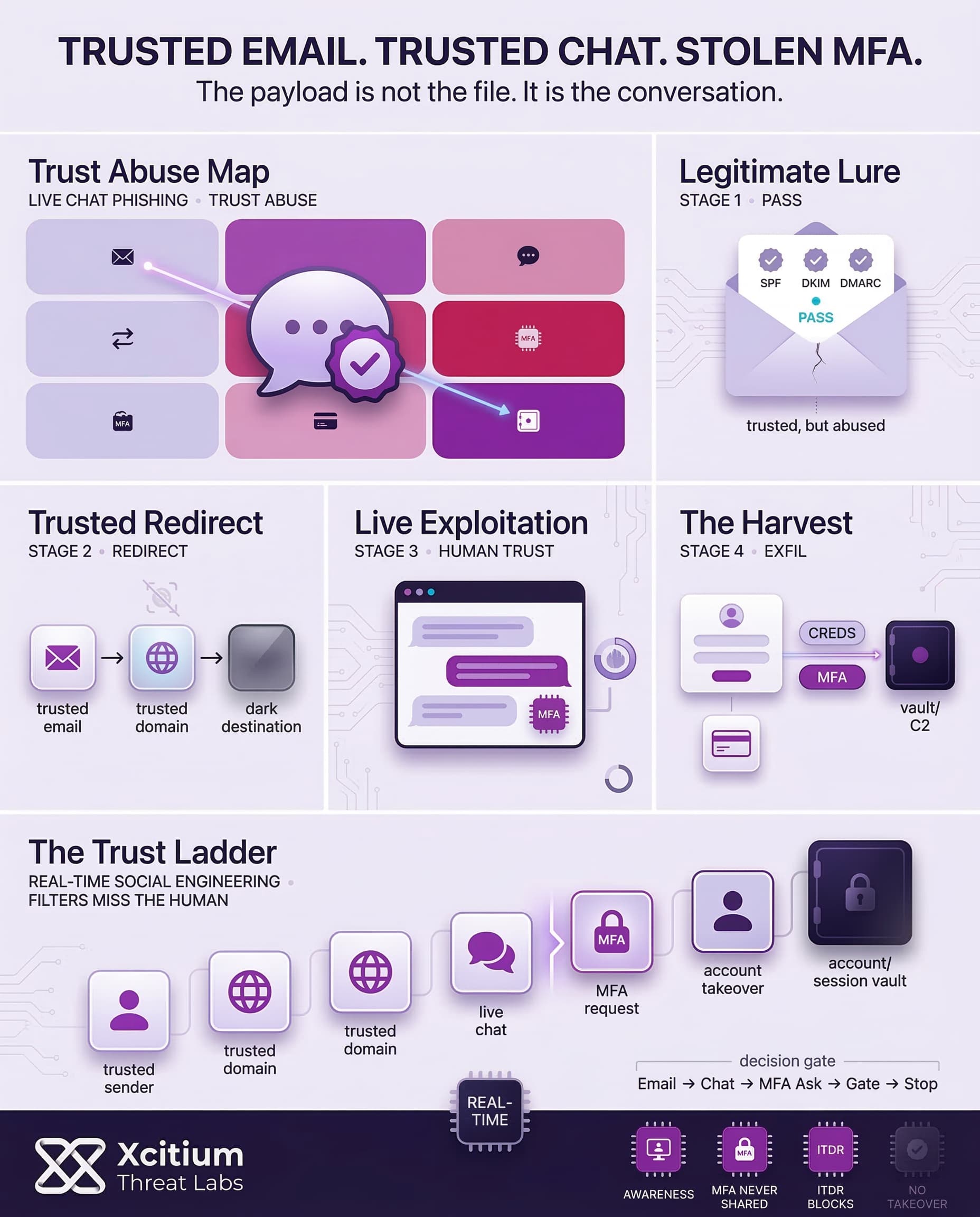

Conclusion: When Trusted Infrastructure Becomes the Phishing Payload

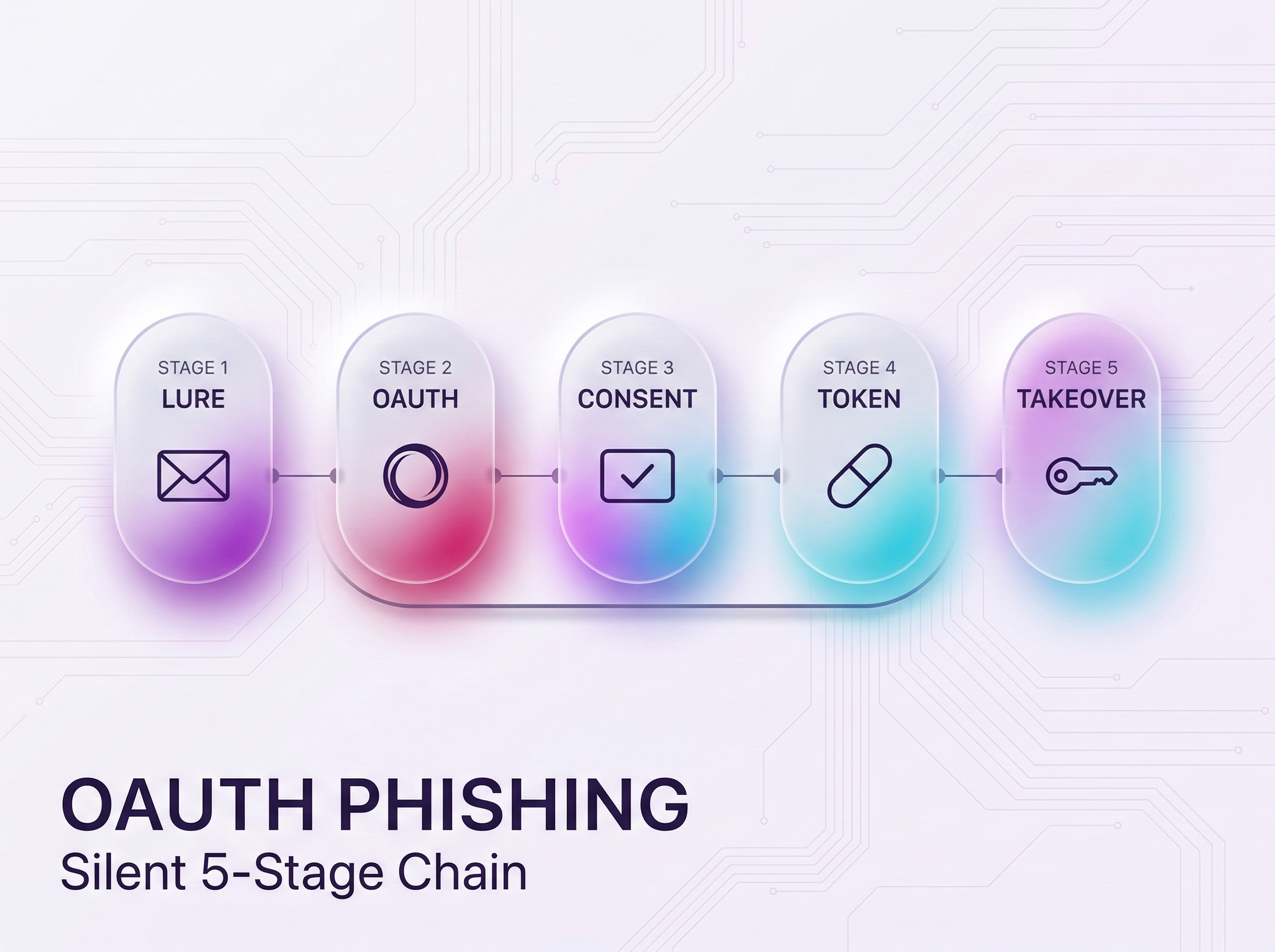

Live chat phishing is not a new channel; it is a new advantage. Attackers abuse high-reputation platforms to deliver emails that pass SPF, DKIM, and DMARC, then route victims into a real-time LiveChat session hosted on a trusted domain like lc.chat. From there, the scam becomes interactive, and the attacker harvests what matters most: credentials, MFA codes, and billing data.

Why This Works So Well

This model succeeds because it removes the signals defenders look for.

- The sender is legitimate infrastructure, so filtering is less likely to trigger.

- The link lands on a trusted support domain, which many environments treat as safe by default.

- A human or bot builds confidence in real time, then asks for the MFA code as “verification.”

The attack is designed to feel like customer service, not crime.

Where Xcitium Changes the Outcome

With Xcitium in place, this attack would NOT succeed.

- Xcitium Cyber Awareness Education and Phishing Simulation trains users to treat refund and support chats as high-risk workflows, and to never share MFA codes, even with someone who appears to be legitimate support.

- Xcitium ITDR helps stop the follow-on phase by detecting abnormal identity behavior and blocking takeover attempts before stolen credentials and codes become persistent access.

The attacker loses because the user does not complete the scam, and identity abuse is stopped if they try.

Protect the Decision Point, Not Just the Inbox

This is not a link problem. It is a trust-abuse problem. Train for real-time manipulation, and monitor identity sessions continuously, because attackers now weaponize legitimate platforms to look authentic.
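To make continuous identity monitoring concrete, the sketch below flags an MFA-approved login that arrives from both a device and a country the user has never used before, which is what a takeover with a freshly phished code might look like. This is an illustrative assumption, not a description of how Xcitium ITDR or any other product works. The `AuthEvent` fields and the rule itself are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class AuthEvent:
    """Hypothetical login record; field names are assumptions for this sketch."""
    user: str
    time: datetime
    device_id: str
    country: str
    mfa_passed: bool


def flag_new_context_logins(events: list[AuthEvent]) -> list[AuthEvent]:
    """Flag MFA-approved logins from a device AND country never seen for the user."""
    seen: dict[str, set[tuple[str, str]]] = {}
    flagged = []
    for ev in sorted(events, key=lambda e: e.time):
        known = seen.setdefault(ev.user, set())
        devices = {device for device, _ in known}
        countries = {country for _, country in known}
        is_new_context = ev.device_id not in devices and ev.country not in countries
        if known and ev.mfa_passed and is_new_context:
            # Do not add flagged events to the baseline until they are reviewed.
            flagged.append(ev)
        else:
            known.add((ev.device_id, ev.country))
    return flagged


if __name__ == "__main__":
    history = [
        AuthEvent("alice", datetime(2025, 1, 1, 9, 0), "laptop-1", "US", True),
        AuthEvent("alice", datetime(2025, 1, 2, 9, 0), "laptop-1", "US", True),
        AuthEvent("alice", datetime(2025, 1, 2, 9, 5), "unknown-box", "XX", True),
    ]
    for ev in flag_new_context_logins(history):
        print(ev.user, ev.device_id, ev.country)
```

A rule this simple would produce false positives for travelers and new devices, so in practice it would be one input among several rather than a blocking decision by itself.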