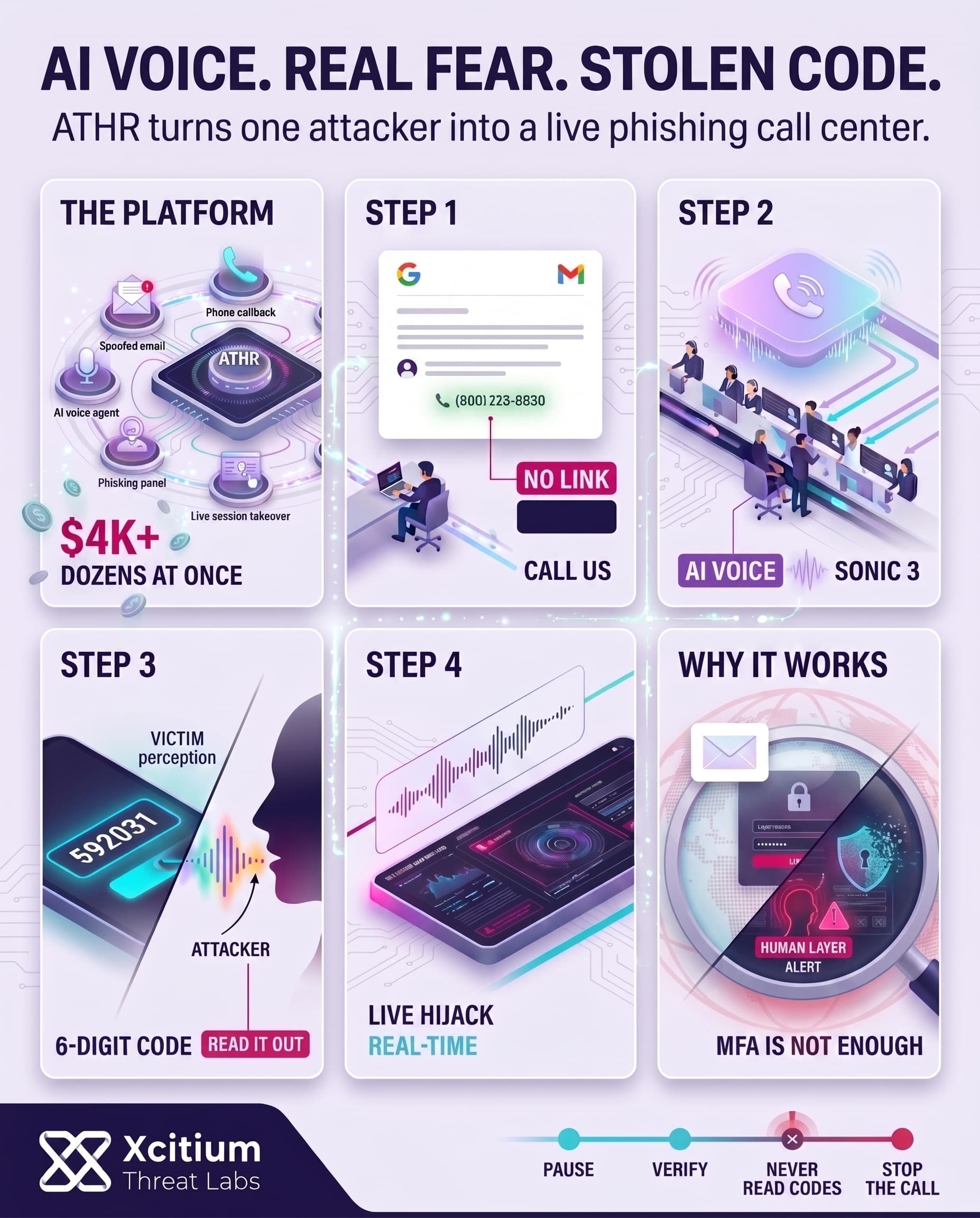

A new threat called ATHR is changing the voice-phishing landscape. This all-in-one platform consolidates email phishing tools, call routing, and credential-stealing panels into a single offering. Criminals can buy the kit for about $4,000 plus a cut of stolen profits.

ATHR provides ready-made email templates (spoofed alerts from Google, Microsoft, Coinbase, etc.) and embeds a callback phone number in each message. When a target calls, the system routes the call through a browser-based interface (using Asterisk/WebRTC) to either a human scammer or an AI “vishing” agent. This means one operator can send dozens of realistic phishing emails and handle dozens of calls in real time, all from a single dashboard.

- Integrated Phishing Kit: Built-in mailer with brand-specific templates, a spoofed “from” field, per-target customization, and messages built to pass technical authentication checks so that emails look legitimate.

- AI-Driven Calls: Web-based phone system that sends callbacks to scripted AI voice agents or trained operators. The AI agents mimic support staff and follow a multi-step scam script.

- Credential Panels: Live phishing pages for eight major services (Google, Microsoft, crypto exchanges, etc.) ready to capture login credentials and one-time codes.

In short, ATHR automates the entire telephone-oriented attack delivery (TOAD) chain. Instead of stitching together separate tools, attackers get a packaged service. They can tweak email alerts with real-looking dates, IPs and locations to make each victim think the message is legitimate.
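On the defensive side, the spoofed “from” field is one of the few places where a lure like this can still give itself away. The following is a minimal sketch, not a production filter: it flags messages whose display name carries a well-known brand while the address domain does not belong to that brand. The `BRAND_DOMAINS` allowlist and the `brand_mismatch` helper are hypothetical names introduced for illustration, and the domains listed are examples rather than a complete inventory.

```python
from email.utils import parseaddr
from typing import Optional

# Hypothetical allowlist: brand keyword -> domains that brand is assumed to send from.
# Illustrative only; a real deployment would maintain its own list.
BRAND_DOMAINS = {
    "google": {"google.com"},
    "microsoft": {"microsoft.com"},
    "coinbase": {"coinbase.com"},
}


def brand_mismatch(from_header: str) -> Optional[str]:
    """Return the brand named in the display name if the sender's
    domain is not one of that brand's allowed domains, else None."""
    display_name, address = parseaddr(from_header)
    domain = address.rpartition("@")[2].lower()
    name = display_name.lower()
    for brand, domains in BRAND_DOMAINS.items():
        if brand not in name:
            continue
        # Accept the exact domain or any subdomain of it.
        trusted = any(domain == d or domain.endswith("." + d) for d in domains)
        if not trusted:
            return brand
    return None


if __name__ == "__main__":
    print(brand_mismatch('"Google Security" <alerts@secure-login.example>'))
    print(brand_mismatch("Google <no-reply@accounts.google.com>"))
```

This only catches the case where the brand appears in the display name and the address points elsewhere. It says nothing about messages sent from look-alike domains that do not mention the brand in the display name, so it is best treated as one signal among several.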

Automated Attacks: From Phishing Email to AI Call

The attack starts with a seemingly harmless alert email. For instance, it might say that your Google account has been locked and instruct you to call the provided phone number to unlock it. Under the hood, ATHR’s internal mailer spoofs the sender address and fills the message with context-specific data (lock time, IP address, etc.) to help it get past spam filters. The message is delivered because it contains no malicious links and does not fail SPF/DKIM validation checks.
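Because these emails carry no malicious link, link-based scanning has nothing to inspect. One possible compensating heuristic, sketched below under stated assumptions, is to score the combination of signals the lure depends on: a phone number, no links, a commonly spoofed brand, and urgent account language. The keyword lists, the phone-number pattern (which targets North American formats only), and the names `score_callback_lure` and `LureReport` are all hypothetical and would need tuning against real mail flow.

```python
import re
from dataclasses import dataclass, field

# Hypothetical keyword lists for illustration; tune for your own environment.
BRANDS = ("google", "microsoft", "coinbase")
URGENCY = ("locked", "suspended", "unusual login", "unusual sign-in", "immediately")

# Rough pattern for North American style phone numbers (an assumption).
PHONE_RE = re.compile(
    r"(?:\+?\d{1,3}[\s.-]?)?(?:\(\d{3}\)|\d{3})[\s.-]?\d{3}[\s.-]?\d{4}"
)
URL_RE = re.compile(r"https?://\S+")


@dataclass
class LureReport:
    score: int
    reasons: list = field(default_factory=list)


def score_callback_lure(subject: str, body: str) -> LureReport:
    """Score an email for callback-phishing traits. A phone number is
    required; without one the message scores 0."""
    text = f"{subject}\n{body}".lower()
    if not PHONE_RE.search(text):
        return LureReport(score=0)
    reasons = ["contains a callback phone number"]
    if not URL_RE.search(text):
        reasons.append("contains no links")
    if any(brand in text for brand in BRANDS):
        reasons.append("mentions a commonly spoofed brand")
    if any(term in text for term in URGENCY):
        reasons.append("uses urgent account language")
    return LureReport(score=len(reasons), reasons=reasons)


if __name__ == "__main__":
    report = score_callback_lure(
        "Security alert: your Google account is locked",
        "We detected an unusual login from 203.0.113.7. "
        "Call 1-888-555-0142 to unlock your account.",
    )
    print(report.score, report.reasons)
```

A high score is a reason to quarantine or add a warning banner, not proof of fraud: plenty of legitimate emails include a phone number, which is why the sketch only counts the number as meaningful when other signals accompany it.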

Once the victim dials the number, ATHR’s telephony component kicks in. Calls are handled through a browser-based dashboard, much like in a regular call center, and are routed either to human operators or to AI voice agents. The voice agents speak with victims directly and follow a pre-written scenario:

- Verify Callback: The agent confirms it’s talking to the right person (e.g. “I see you’re calling about your account”).

- Describe a Problem: It warns of some urgent issue (unusual login attempt, account lockdown, etc.).

- Fake Recovery Process: The agent “walks through” verifying identity and recovering the account.

- Code Extraction: Near the end, the victim is asked to read out a six-digit verification code shown on their screen.

The code is collected at the point where the victim is fully convinced they are proving their identity to the caller. In reality, the code lets the attacker log in as the victim as soon as it is read aloud. ATHR’s voice agents sound like regular customer service representatives, and their scripts are detailed (one script contains 10 sections). The system even uses its own “Sonic 3” speech synthesis model.

Automation at Scale: Turning One Attacker into a Call Center

Because it’s AI-driven, ATHR removes the need for skilled human callers. One operator can oversee dozens of AI calls at once, monitoring progress via a live dashboard. That dashboard shows each target’s session, captured form fields, and notes, updating in real time. For each active call, the operator sees the victim’s IP, the phishing page they’re on, and any credentials entered. If needed, the operator can even swap in different credential panels on the fly, steering the conversation from Google’s site to Coinbase’s login page, for instance. Meanwhile, stolen emails and passwords appear in the dashboard seconds after they’re entered.

Overall, ATHR means a single criminal can launch a voice-phishing campaign with minimal effort. What used to require an emailer, a call center, and a credential phishing site is now all in one place. As a result, vishing attacks can scale up rapidly and feel more authentic to victims.

AI Voice Agents: The New Social Engineers

Traditional voice phishing relies on either human callers or pre-recorded audio messages. The AI in ATHR, by contrast, can adapt its script to the conversation as it unfolds.

For instance, if the victim asks a question or seems unsure, the agent can respond accordingly. This dynamic interaction makes the call feel unrehearsed. Victims are less likely to suspect that the “support agent” is following a scam script because it responds to questions and prompts naturally.

These characteristics are particularly concerning given that surveys reveal that 68% of Americans get scam phone calls at least once per week. Furthermore, deepfake voices are already being used to steal hundreds of millions. In one widely reported case from early 2024, a finance employee in the Hong Kong office of a UK-based firm joined a video call featuring deepfaked versions of the company’s CFO and colleagues, then transferred about $25 million to the perpetrators. ATHR builds on this idea by bringing synthetic voices into live conversation and identity theft.

Notable attributes of ATHR’s AI agents include:

- Professional Tone: Speaks like legitimate tech or bank support, matching the spoofed brand’s style.

- Adaptive Script: Ten detailed steps allow flexibility; for example, if the user resists, the agent might offer additional “verification” steps.

- Multi-brand Capability: Can handle scripts for different companies (Google vs. Coinbase) from the same interface.

- Natural-Sounding Voice: Uses advanced text-to-speech (ATHR TTS, “Sonic 3” model) to avoid robotic speech patterns.

Consequently, the AI’s calls are particularly convincing. Unlike conventional robocalls or lone scammers, the voice agents convey professionalism, authority, and knowledge of the situation. Preliminary findings indicate that victims typically accept the fabricated narrative and provide details just as they would to genuine tech support.

Conclusion: When AI Becomes the Voice of the Scam

ATHR shows how voice phishing is evolving from scattered fraud into an industrialized attack model. What once required a spammer, a call center, and multiple phishing tools can now be launched from a single dashboard, with AI voice agents guiding victims through realistic, multi-step conversations until they read out the code that gives attackers access.

Why This Threat Matters Now

This is not just social engineering with better scripts. It is social engineering at scale.

- One operator can supervise dozens of live phishing sessions at once

- AI agents adapt in real time, answer questions, and sound like legitimate support staff

- Emails avoid obvious malicious links, making delivery and trust easier

- The final step is simple: convince the victim to read out the six-digit code on their screen

Why Organizations Are Exposed

ATHR succeeds because it attacks the human layer after technical controls have already done their job.

The email looks routine.

The callback number feels official.

The voice sounds credible.

The victim believes they are proving identity, when in reality they are authorizing compromise.

Where Xcitium Changes the Outcome

If you have Xcitium, this attack will NOT succeed.

With Xcitium Cyber Awareness Education and Phishing Simulation in place:

- Employees learn that real support teams do not ask them to read back live verification codes

- Simulated exercises build pause-and-verify behavior under pressure

- Brand spoofing, callback lures, and urgent recovery scripts become recognizable patterns

- The attacker loses at the decision point, because the victim never completes the workflow

Stop the Call Before It Becomes Access

ATHR proves that MFA is only as strong as the user standing in front of it. Train for the conversation, not just the click, and make voice-driven social engineering fail before the code is ever spoken.