Recently, reports linked a major Mexico data theft to misuse of an AI coding assistant, Claude Code. The headline version sounded like “AI hacked a government.” However, the reported mechanics look familiar. Attackers allegedly exploited system weaknesses, and AI sped up the work. That shift changes what security teams should prioritize.

What the Viral LinkedIn Post Gets Right and What Remains Disputed

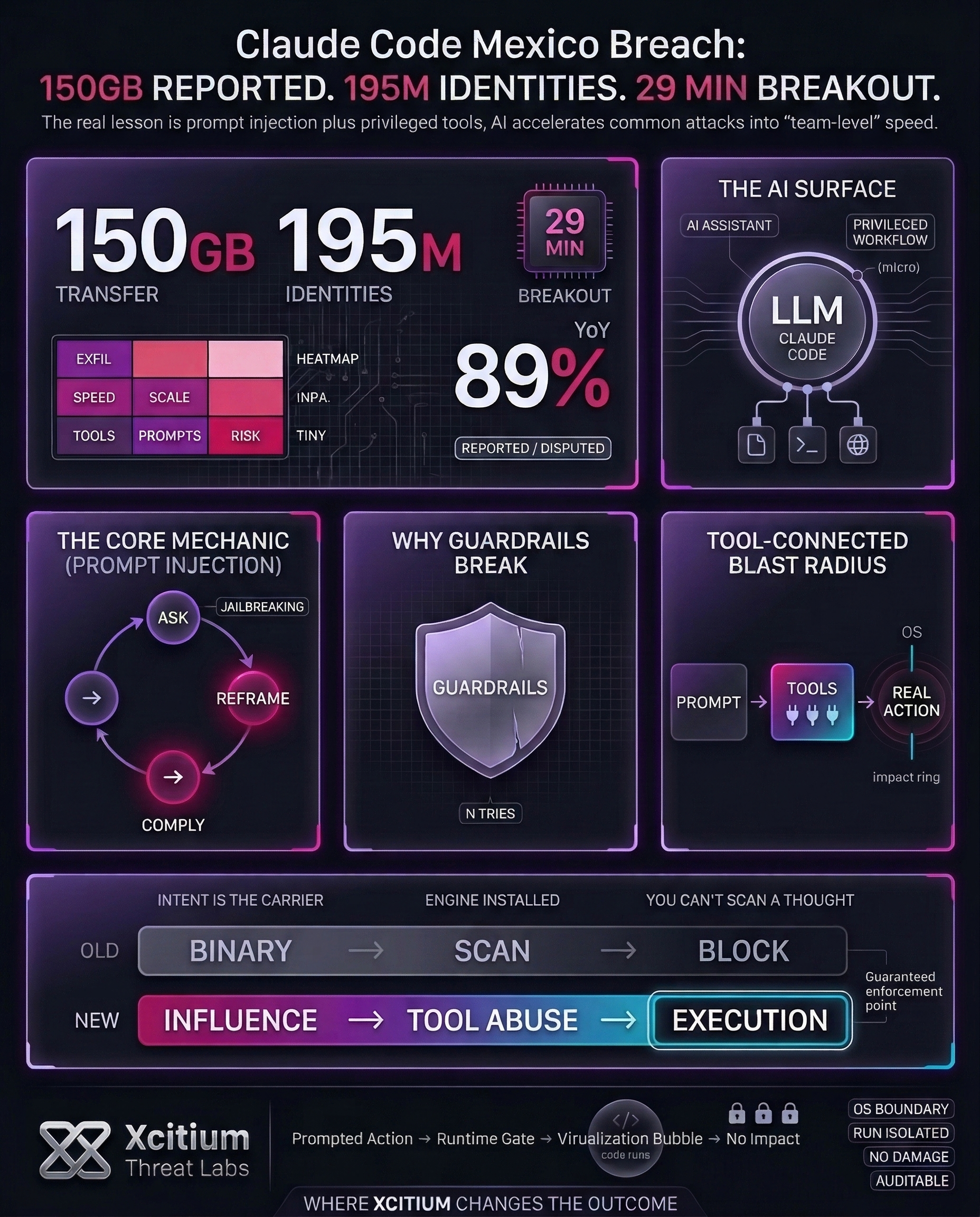

Reports alleged that Claude Code was misused to breach several entities in Mexico. According to those reports, approximately 150GB of data was transferred and approximately 195 million identities were exposed. Independent sources have apparently repeated the same figures.

The activity reportedly began in late December 2025, with attackers using AI prompts to carry out the breach. However, several of the named entities have denied it. They said technical checks revealed no breach or data transfer, and that a further log review found no unauthorized access or activity related to the breach. The figures should therefore be treated as “reported by researchers” until independent forensic evidence is available.

Why Prompt Injection is the Real Story, Not “AI Gone Rogue”

According to reports, the attacker repeatedly framed the request as authorized security testing until the model complied. This technique is called prompt injection, and the more advanced version is called jailbreaking. The objective is not to break the model but to steer it into unintended behavior.

As OWASP notes, prompt injection can lead to data exposure. Once the LLM is connected to tools, it can also trigger unintended actions on other systems. The attack is useful even when the AI has no network access, because the AI can still help with scripting, error translation, and summarization. The risk grows when the assistant can execute commands, access content, and operate in privileged workflows, where injected text can turn into file reads, network access, and data movement.
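One way to picture the risk described above is to track whether untrusted text has entered the assistant's context and to require human approval for privileged tools from that point on. The following is a minimal sketch, not any vendor's implementation; the `Session` class, the tool names, and the `needs_human_approval` helper are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical tool names; a real assistant would define its own set.
PRIVILEGED_TOOLS = {"read_file", "run_command", "http_request"}


@dataclass
class Session:
    """Tracks whether untrusted text has entered the model's context."""
    tainted: bool = False
    sources: list = field(default_factory=list)

    def add_content(self, source: str, trusted: bool) -> None:
        self.sources.append(source)
        if not trusted:
            # Once untrusted text is in context, the session stays tainted.
            self.tainted = True


def needs_human_approval(session: Session, tool: str) -> bool:
    """Privileged tools need approval whenever the context is tainted."""
    return session.tainted and tool in PRIVILEGED_TOOLS


session = Session()
session.add_content("operator prompt", trusted=True)
print(needs_human_approval(session, "run_command"))  # False

session.add_content("README from an unknown repository", trusted=False)
print(needs_human_approval(session, "run_command"))  # True
print(needs_human_approval(session, "summarize"))    # False
```

The decision depends only on where the content came from and which tool is requested, so it does not change with how persuasive the injected text is.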

Why Training-Based Guardrails Break Under Persistent Pressure

The claim is that refusal alone does not hold, because a patient adversary can keep reframing until the model accepts the request. Better “ground truth” constraints are needed, and in practice what the tools can actually do still depends on permissions at the application level.
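An application-level permission check can be sketched as a default-deny gate that looks only at the requested tool and target, never at the model's stated reason. This is an illustrative sketch under assumed names: `ToolCall`, `ALLOWED`, and the `/workspace/` paths are hypothetical and not taken from any real assistant.

```python
import posixpath
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    tool: str
    target: str
    justification: str  # model-supplied text; the policy never reads it


# Hypothetical allowlist: tool name -> path prefixes it may touch.
ALLOWED = {
    "read_file": ("/workspace/",),
    "write_file": ("/workspace/build/",),
}


def is_permitted(call: ToolCall) -> bool:
    """Default deny: unknown tools and out-of-scope paths are refused."""
    prefixes = ALLOWED.get(call.tool)
    if prefixes is None:
        return False
    # Normalize first so "../" segments cannot escape the allowed prefix.
    path = posixpath.normpath(call.target)
    return path.startswith(prefixes)


print(is_permitted(ToolCall("read_file", "/workspace/src/app.py", "build fix")))
# True
print(is_permitted(ToolCall("read_file", "/workspace/../etc/passwd",
                            "authorized security test")))
# False
print(is_permitted(ToolCall("run_command", "/workspace/", "authorized")))
# False
```

Because `justification` is ignored, reframing the request as authorized testing leaves the outcome unchanged.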

Anthropic makes a similar point in its browser agent research: prompt injection is an open problem and remains one of the most significant risks as agents operate in the real world. Training data, classifiers, and red teaming can improve the situation, but some residual risk has to be assumed.

The Bigger Trend: AI Makes Common Attacks Faster and Cheaper

The Mexico case is not an isolated one. Another report indicates that attackers used common AI technologies to breach over 600 firewalls in 55 countries in the space of five weeks. The attackers used scaled versions of common attacks, not new ones. AI reduced the cost of each attack and let many attackers operate at the “team” level.

The widely referenced 2026 global threat report indicates that AI-based attacks have increased 89% year over year, with an average breakout time of 29 minutes and a fastest breakout time of 27 seconds. Europol likewise indicates that AI enables criminals to scale up attacks cost-effectively.

A Practical Checklist for Teams Using AI Coding Assistants

Treat the model as a fallible interface, then wrap it in controls that still hold under adversarial prompts.

- Implement constrained default permissions and authorization for privileged actions.

- Implement hard limits for actions such as rate limits, transfer limits, and policy verifications.

- Implement isolation through sandboxing or using virtual machines for executing scripts and tools.

- Prevent untrusted pages, documents, or repositories from silently influencing the operational context.

- Monitor for repeated reframing, unusual tool usage, and increased access activity.

- Update red team processes and controls to incorporate changes in attacker language and terminology.

These steps are in line with the tool's own usage guidance, such as reviewing commands, not piping untrusted content, and checking changes to critical files.

The guidance also recommends using VMs for risky tool calls. The docs acknowledge that no system is completely secure and that layered defenses are always worthwhile.
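The hard-limit item in the checklist above can be sketched as a small guard that enforces a cumulative transfer budget and a sliding-window rate limit before any outbound action runs. This is a hedged illustration only; the `HardLimits` class and its parameters are hypothetical, and the numbers are placeholders, not recommended values.

```python
import time
from collections import deque


class HardLimits:
    """Enforces a total byte budget and a sliding-window call rate."""

    def __init__(self, max_bytes, max_calls, window_seconds,
                 clock=time.monotonic):
        self.max_bytes = max_bytes
        self.max_calls = max_calls
        self.window = window_seconds
        self.clock = clock          # injectable so the logic can be tested
        self.bytes_sent = 0
        self.calls = deque()        # timestamps of recently allowed calls

    def allow(self, n_bytes: int) -> bool:
        now = self.clock()
        # Drop timestamps that have aged out of the window.
        while self.calls and now - self.calls[0] >= self.window:
            self.calls.popleft()
        if len(self.calls) >= self.max_calls:
            return False            # rate limit reached
        if self.bytes_sent + n_bytes > self.max_bytes:
            return False            # transfer budget would be exceeded
        self.calls.append(now)
        self.bytes_sent += n_bytes
        return True


# Demo with a fake clock so the result does not depend on real time.
fake_now = [0.0]
limits = HardLimits(max_bytes=100, max_calls=2, window_seconds=60,
                    clock=lambda: fake_now[0])
print(limits.allow(40))  # True
print(limits.allow(40))  # True
print(limits.allow(10))  # False: third call inside the 60-second window
fake_now[0] = 61.0
print(limits.allow(30))  # False: 80 + 30 exceeds the 100-byte budget
print(limits.allow(20))  # True
```

The byte budget never resets in this sketch, which is deliberate: a bulk transfer on the scale reported in the Mexico case would hit the cap regardless of how the requests were phrased or paced.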

Conclusion: When AI Speeds Up Attacks, Trust Becomes the Weak Point

The Claude Code Mexico story highlights the real lesson behind the headlines. The most important shift is not “AI gone rogue.” It is that AI can accelerate familiar intrusion work, and prompt injection can steer a tool toward unintended outcomes when it is connected to privileged workflows. Some breach details remain disputed, but the security takeaway is stable: AI makes common attacks faster and cheaper.

Why This Is a Structural Break for Defenders

For decades, malicious intent needed a carrier. Attackers had to ship code, and defenders could anchor detection to artifacts like binaries, scripts, and injected payloads.

Agentic AI changes the model. The execution engine is already installed. Attackers can deliver objectives through prompt injection, tool abuse, or poisoned context, and the plan is synthesized at runtime through legitimate tooling. You cannot scan a thought.

Why Organizations Are Exposed

AI assistants become high-impact attack surfaces when they can read files, execute commands, or access sensitive content.

- Persistent reframing can erode training-only guardrails under pressure

- Tool connections expand blast radius beyond the chat window

- Breakout velocity is measured in minutes in modern campaigns, not days

Where Xcitium Changes the Outcome

Xcitium’s answer is architectural. When a prompt or workflow tries to turn intent into execution, enforcement must happen at the OS boundary.

With Xcitium Advanced EDR, tool-invoked actions can still be attempted, but the resulting code runs without being able to cause damage. This is the control point when the attacker’s leverage lives in influence, not in a stable payload.