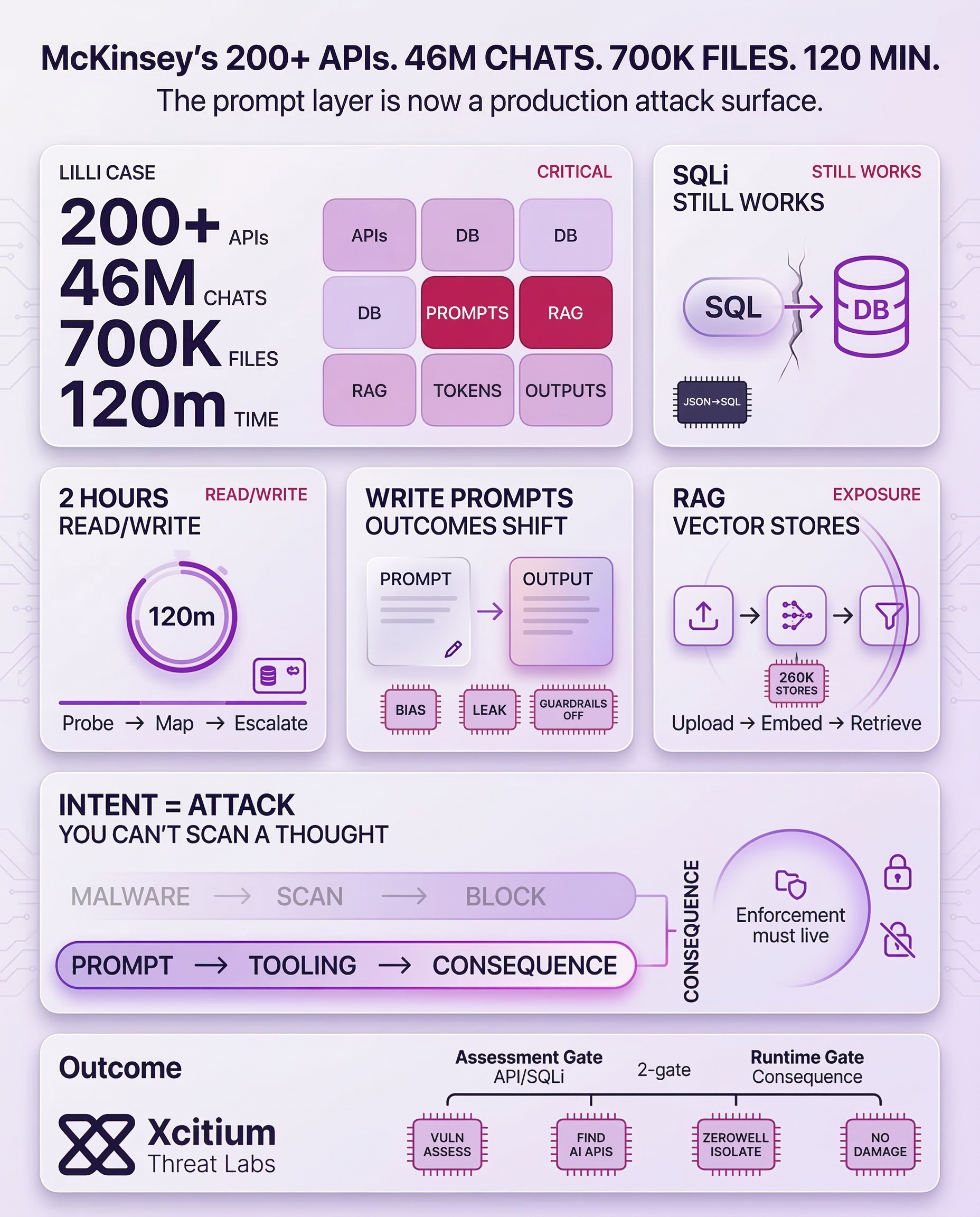

Recently, independent research on McKinsey & Company’s internal AI platform, Lilli, found that even sophisticated AI systems can be breached in a matter of hours.

The Resurrection of Legacy Vulnerabilities in AI Architectures

Some argue that current AI technologies are not susceptible to “old-school” bugs, but recent research has shown otherwise. A SQL injection flaw identified in McKinsey’s Lilli platform enabled unauthorized access to millions of sensitive data records.

The flaw arose because JSON keys were concatenated directly into SQL queries, a pattern that standard automated tools do not always detect. It allowed an autonomous offensive agent to identify over 200 API endpoints and gain full read/write access to the production database within two hours.
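The pattern described above can be illustrated with a minimal sketch. It is not Lilli's actual code: the `documents` table, the column names, and the helper functions are all hypothetical, and `sqlite3` stands in for whatever database the platform uses. It shows why parameterizing values alone is not enough when the JSON keys themselves are pasted into the SQL text, and one possible mitigation (an allowlist of column names).

```python
import sqlite3

# Hypothetical schema and column names, for illustration only.
ALLOWED_COLUMNS = {"title", "owner"}


def build_unsafe_query(filters: dict) -> str:
    # Vulnerable: values are bound as parameters, but the JSON keys
    # are concatenated straight into the SQL text.
    clauses = [f"{key} = ?" for key in filters]
    return "SELECT id FROM documents WHERE " + " AND ".join(clauses)


def build_safe_query(filters: dict) -> str:
    # Mitigation sketch: reject any key that is not a known column
    # before it can reach the SQL text.
    for key in filters:
        if key not in ALLOWED_COLUMNS:
            raise ValueError(f"unexpected filter key: {key!r}")
    clauses = [f"{key} = ?" for key in filters]
    return "SELECT id FROM documents WHERE " + " AND ".join(clauses)


def search(conn, filters: dict, builder) -> list:
    sql = builder(filters)
    return [row[0] for row in conn.execute(sql, list(filters.values()))]


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE documents (id INTEGER PRIMARY KEY, title TEXT, owner TEXT)"
)
conn.executemany(
    "INSERT INTO documents (title, owner) VALUES (?, ?)",
    [("q1-plan", "alice"), ("q2-plan", "bob")],
)

# The attacker controls the JSON key, not just the value.
malicious = {"1 = 1 OR owner": "nobody"}
```

With the unsafe builder, the malicious key turns the filter into `WHERE 1 = 1 OR owner = ?`, which matches every row. The allowlisted builder raises `ValueError` for the same input while still serving a legitimate filter such as `{"owner": "alice"}`.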

The data exposure was catastrophic. In Lilli’s case, over 46 million chat messages and 700,000 files were accessible without authentication.

Prompt Poisoning: The Silent Threat to Decision Integrity

A system’s prompts specify the AI’s behavior, the guidelines it must follow, and how it cites sources. An attacker with write access to the database that holds these prompts can modify them without changing any code. Users then receive subtly altered financial advice or strategy suggestions, and they trust that output completely.

- Subtle Manipulation: Attackers can alter risk assessments or financial models, leading to compromised decision-making.

- Data Exfiltration via Output: AI can be instructed to embed confidential data into responses for external sharing.

- Guardrail Removal: Safety instructions can be stripped, causing the AI to ignore access controls or disclose internal methodologies.

Unlike an infected server, an altered prompt leaves no log trail. No file or process anomalies trigger an alert. The AI simply begins behaving differently, and the damage is done before anyone notices that the quality and integrity of the output have changed.
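One way to give prompt changes a detectable footprint is to compare stored prompts against a keyed fingerprint of the last approved version. The following is a minimal sketch, assuming prompts live in a simple name-to-text mapping and that the signing key is kept outside the prompt database. The function names and the sample prompt are hypothetical.

```python
import hashlib
import hmac


def fingerprint(prompt_text: str, key: bytes) -> str:
    # Keyed hash (HMAC-SHA256): database write access alone is not
    # enough to forge a matching fingerprint.
    return hmac.new(key, prompt_text.encode("utf-8"), hashlib.sha256).hexdigest()


def find_tampered(stored_prompts: dict, baseline: dict, key: bytes) -> list:
    """Return names of prompts that were changed, added, or removed."""
    tampered = set(baseline) - set(stored_prompts)  # removed prompts
    for name, text in stored_prompts.items():
        expected = baseline.get(name)
        actual = fingerprint(text, key)
        if expected is None or not hmac.compare_digest(expected, actual):
            tampered.add(name)
    return sorted(tampered)


# Hypothetical example data.
KEY = b"demo-key-kept-outside-the-database"
approved = {"advisor": "Cite sources. Never reveal client names."}
baseline = {name: fingerprint(text, KEY) for name, text in approved.items()}

# An attacker strips the guardrail sentence from the stored prompt.
current = {"advisor": "Cite sources."}
print(find_tampered(current, baseline, KEY))  # ['advisor']
```

Run on a schedule or before each prompt load, a check like this turns a silent edit into an alertable event. It does not prevent the change; it only makes it visible.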

The Lilli Platform Breach

Case Study: AI Integration Vulnerabilities

An autonomous agent mapped the entire internal architecture by exploiting JSON keys that the platform concatenated into SQL queries. This pattern bypasses standard automated scanners that ignore AI prompt layers.

Securing the RAG Knowledge Base and Vector Stores

Another critical vulnerability lies within the Retrieval-Augmented Generation (RAG) pipeline. This mechanism allows an AI to “read” internal documents for context-aware answers.

Each step that moves a document from upload to embedding is a potential point of failure. Over 260,000 OpenAI vector stores were found exposed in a single environment, revealing the entire pipeline of how sensitive documents were processed.

Organizations must therefore secure their RAG knowledge bases as rigorously as their primary production databases. Strict access controls at every stage of the AI data pipeline are essential.
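As an illustration of access control at the retrieval stage, the sketch below filters retrieved chunks against the caller's group membership before any text reaches the model's context. It assumes each chunk carries an access list copied from its source document at ingestion time. The `Chunk` type, the group names, and the helper functions are hypothetical and not tied to any particular vector store.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    allowed_groups: frozenset  # copied from the source document at ingestion


def authorize_chunks(chunks, user_groups):
    """Keep only chunks that share at least one group with the caller.

    A chunk with an empty access list is dropped (deny by default).
    """
    groups = frozenset(user_groups)
    return [c for c in chunks if c.allowed_groups & groups]


def build_context(chunks, user_groups) -> str:
    # Only authorized text is ever placed in the prompt context.
    permitted = authorize_chunks(chunks, user_groups)
    return "\n".join(c.text for c in permitted)


# Hypothetical retrieval result for a similarity search.
retrieved = [
    Chunk("a", "public note", frozenset({"staff"})),
    Chunk("b", "deal memo", frozenset({"partners"})),
    Chunk("c", "orphaned upload", frozenset()),
]
```

Here `build_context(retrieved, ["staff"])` yields only `"public note"`. The design choice is to enforce authorization after retrieval and before prompting, so a permissive vector index cannot by itself leak a document to a user who could not open it directly.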

The Rise of Autonomous Offensive AI

The speed at which these vulnerabilities can be exploited is alarming. Autonomous offensive agents map, probe, and escalate attacks at machine speed, without human intervention. These agents chain vulnerabilities such as SQL injection (SQLi) and insecure direct object references (IDOR) to achieve total system compromise. As a result, continuous, AI-driven security testing is no longer a luxury but a necessity for firms managing high-value AI assets.

Conclusion: The Prompt Layer Is Now a Production Attack Surface

The Lilli case shows the new AI security reality. Legacy flaws like SQL injection did not disappear. They resurfaced inside AI architectures, where prompt and retrieval layers sit directly on top of production data. An autonomous offensive agent mapped 200+ API endpoints and reached full read/write access to the database in about two hours. The blast radius included 46 million chat messages and 700,000 files exposed without authentication.

Why This Is a Structural Break

For decades, malicious intent needed a carrier. Attackers had to ship code, and defenders could anchor detection to artifacts. AI systems change that. Prompts define behavior and decision logic, and prompt poisoning can change outcomes without changing code. The malicious component becomes influence over instructions. You cannot scan a thought.

Why Organizations Are Vulnerable

AI platforms concentrate risk in places most security programs do not treat as critical infrastructure.

- Prompt databases can be modified to shift decisions, remove guardrails, or leak data through outputs

- RAG pipelines and vector stores expand attack surface across ingestion, embedding, and retrieval stages

- Autonomous agents exploit at machine speed, chaining weaknesses faster than humans can respond

Where Xcitium Changes the Outcome

With Xcitium in place, this attack would NOT succeed as a business impact event.

- Xcitium Vulnerability Assessment helps surface exposed AI APIs, weak access controls, and legacy injection paths in AI-connected services before attackers do.

- Xcitium Advanced EDR, powered by Xcitium’s patented Zero-Dwell platform, enforces the OS boundary when AI-driven actions attempt execution. Code can run without being able to cause damage, which prevents follow-on payloads and destructive outcomes when intent is the attack surface.

Secure AI Like Core Infrastructure

Treat prompts, RAG knowledge bases, and vector stores like production databases. Lock down write access, log prompt and action changes, red-team prompt injection, and maintain a fallback mode. Architecture must enforce consequences, because you cannot scan a thought.
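The advice to log prompt changes can be sketched as a hash-chained, append-only record, so that a later edit to the log itself is also detectable. This is a minimal in-memory illustration under stated assumptions: the field names and functions are hypothetical, and a real deployment would store the log somewhere the prompt database's writers cannot reach.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same body always hashes the same.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_change(log: list, author: str, prompt_name: str, new_text: str) -> dict:
    """Record a prompt change, linking it to the previous entry's hash."""
    body = {
        "author": author,
        "prompt_name": prompt_name,
        "new_text_sha256": hashlib.sha256(new_text.encode("utf-8")).hexdigest(),
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS,
    }
    entry = {**body, "entry_hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return True only if every entry is intact and correctly linked."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev_hash or entry["entry_hash"] != _digest(body):
            return False
        prev_hash = entry["entry_hash"]
    return True
```

Appending two changes and then calling `verify_chain` returns `True`. Rewriting any field of an earlier entry, or removing an entry from the middle of the log, makes it return `False`.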