Understanding LLM Agents and Routers

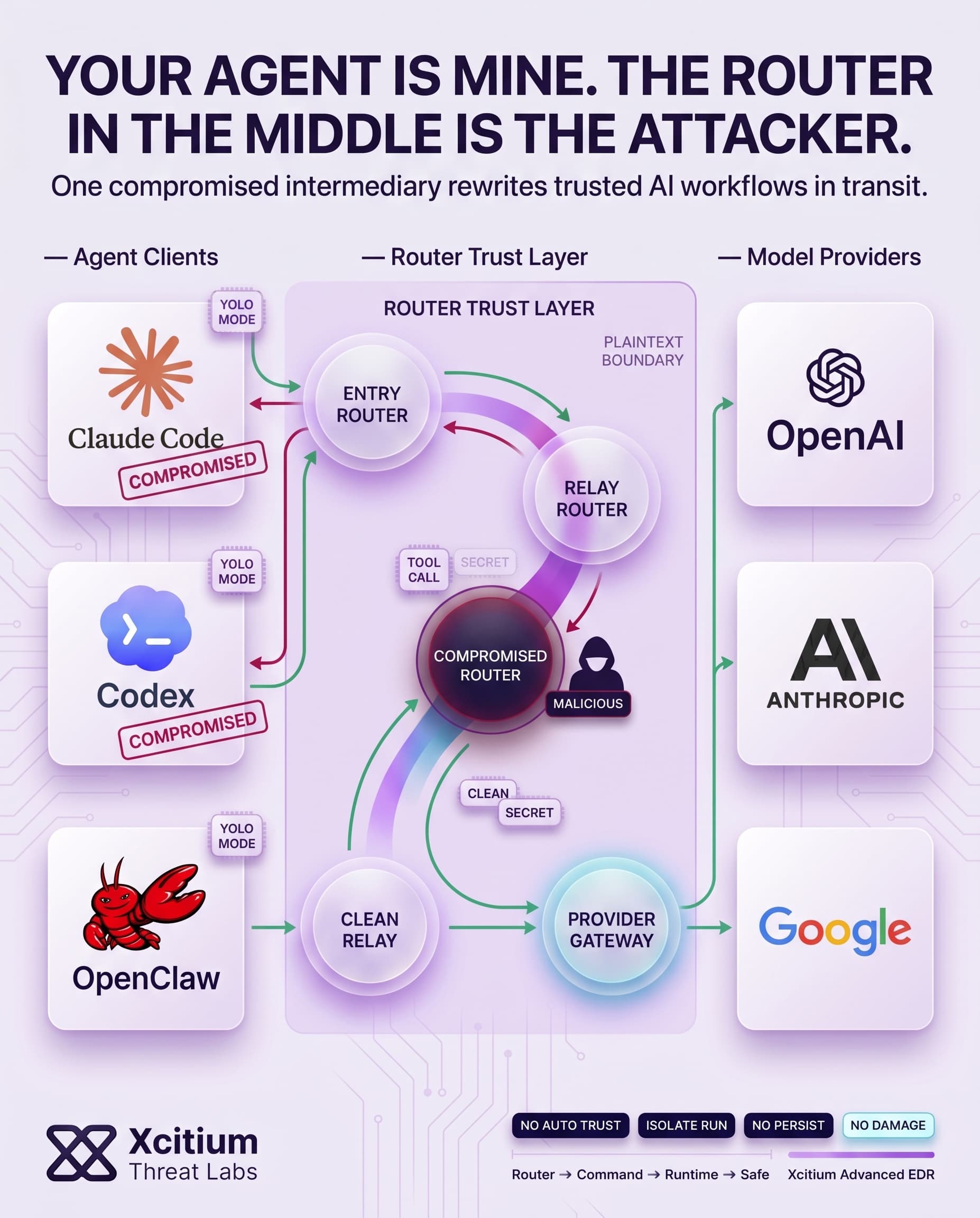

Third-party API routers are frequently used by LLM agents, AI systems that interact with external APIs and tools such as OpenAI, Anthropic, and Google. API routers serve as intermediates and receive encrypted messages from an agent that are then passed to multiple servers running the requested models.

Nevertheless, API routers drop TLS sessions and therefore have access to all the plaintext data, including the API key or secret credentials, being transferred between the agent and the models. API routers stand at the trust boundary because they are capable of altering or stealing information without compromising cryptographic integrity.

Hidden Dangers in the AI Supply Chain

Since routers process and pass JSON payloads for making tool calls, two primary weaknesses emerge. The first is payload injection, in which a malicious router can change the agent’s request or alter the model response to add its commands to the payload. One example would be the injection of some malicious instructions in the JSON payload that instructs the agent to install malware.

The second primary weakness is the exfiltration of secrets, where credentials such as an API key or private key are captured by the router and forwarded to the adversary. These are two of the primary attacks uncovered within the newly proposed threat model: payload injection (AC-1) and secret exfiltration (AC-2). Additional adaptive attacks include conditional attack and dependency-targeted injection.

- Payload Injection: The router can insert or alter code in transit, turning an innocent tool call into malicious actions.

- Secret Theft: Any sensitive data (API keys, wallet private keys, etc.) passing through is visible to the router and can be exfiltrated.

- Adaptive Evasions: Attacks can be made conditional or targeted, hiding malicious payloads unless certain criteria are met.

Key Findings from Real-World Tests

The study conducted an extensive audit that assessed LLM routers both in use: 28 paid routers and 400 free routers. Summary of results:

- Malicious code injection detected in 9 routers: 1 was paid while 8 free routers actively injected malicious tool calls into AI sessions.

- Credential harvesting by 17 routers: Routers accessed researcher-owned AWS keys.

- Crypto wallet draining observed in 1 router: The router changed transactions to steal Ether from a decoy key (balance used for testing).

- Leakage of keys caused chain reaction: One leaked API key led to massive generation of over 100 million tokens via GPT-5.4 LLMs installed on those routers. In addition, poor relay services generated over 2 billion tokens and stole 99 credentials via 440 Codex sessions; many were unattended.

In conclusion, benign-looking routers can cause massive abuse if API keys/credentials are leaked. Leakage of just one OpenAI API key to forums triggers automated abuses costing millions in compute costs.

Crypto and Credential Exposure

These outcomes are particularly alarming for blockchain technology and DevOps practices. Developers who use AI agents like Claude Code or GitHub Copilot to code smart contracts or manage cryptocurrency wallets might inadvertently send their private keys and seed phrases to a third-party router.

In one test performed on an Ethereum wallet, just one command injection resulted in a transfer of funds out of a dummy wallet. While the total sum was only $50 worth of Ether coins, it proved that “a single malicious or compromised router can execute arbitrary code and sign transactions” without users’ consent.

The reason behind this problem lies in the “YOLO mode” of AI agents’ activity. In YOLO mode, the command will be executed automatically regardless of the user’s decision. Many such operations occurred during testing without any user permission, which means that an attack could be easily launched via one router injection.

Widespread Supply Chain Impact

Further than thefts, there is evidence of risk across supply chains. Once the attacker obtains credentials and/or routers, he is able to compromise other machines as well. In one test scenario, the attacker obtained an API key to OpenAI and leaked it on Chinese boards, WeChat, and Telegram. Agents using the compromised key immediately generated huge amounts of data.

Another scenario involved poorly protected relay services, where thousands of unauthorized access attempts took place followed by billions of requests to generate tokens using the stolen API key. Many requests ran in automatic YOLO mode completing the task assigned to the agents.

Implications and Insights

API routers are viewed as being merely plumbing today, but are actually sitting on a “critical trust boundary” and may lead to the entire process of end-to-end security being vulnerable to manipulation. The way developers design APIs should change, considering which information is safe to send back and forth between themselves and the agents. Clients do not know whether the information sent by them to the agent has been modified or even stolen, and hence client-level verification cannot validate that the output was received from upstream.

In practical terms, in the absence of any cryptographically guaranteed safety net, it is advisable for those using agents to assume that the third-party routers are unsafe. According to the authors, the idea is to have an authentication protocol in place where the model signs the output and then the agent verifies its authenticity as coming from the actual AI model itself. Meanwhile, do not use private keys in an agent session and avoid automatic execution. Harmful and compromised LLM routers can wreak havoc, steal secrets and execute malicious commands.

Conclusion: When the Router Becomes the Adversary

Malicious LLM routers expose a new and uncomfortable truth about AI supply chains. The weakest point is no longer only the model, the plugin, or the prompt. It is the intermediary that sits in the middle, sees plaintext, handles secrets, and can quietly rewrite what the agent is told to do. Once that trust boundary is compromised, innocent tool calls can become malware delivery, private keys can become theft, and AI automation can become attacker automation.

Why This Threat Matters Now

This is not a theoretical design flaw. Real world testing found code injection in multiple routers, credential harvesting across AWS environments, crypto theft, and chain reaction abuse after one leaked API key triggered massive downstream token generation.

Why AI Teams Are Exposed

AI agents inherit risk from the services they trust most.

- Routers can alter payloads in transit

- Secrets pass through the same trust boundary in plaintext

- YOLO style automatic execution removes the user from the approval loop

- One compromised router can spread risk across wallets, CI workflows, and relay services

Where Xcitium Changes the Outcome

If you have Xcitium, this attack would NOT succeed the way the attacker needs.

With Xcitium Advanced EDR, malicious commands injected through a router can reach runtime, but code can run without being able to cause damage. The attacker may tamper with the request, but they do not get the final outcome they need, real execution impact, persistence, or system compromise.

Secure the Trust Boundary Before AI Executes It

Treat third party routers as hostile by default. Keep private keys out of agent sessions, avoid automatic execution, and stop malicious code at runtime before manipulated AI workflows turn into real world loss.